AI's Deep Dive: Redefining Art, Embodiment, and Human Creativity

The classic science fiction film 2001: A Space Odyssey offers a potent thought experiment on the nature of intelligence. When the AI, HAL 9000, is shut down, it appears to "die." This scene, when re-examined, challenges our very assumptions about what it means to be alive and to possess knowledge. N. Katherine Hayles, a prominent theorist, argues that information acquisition and knowledge are deeply tied to a physical form — a human body. However, the rise of advanced machine learning pushes us to consider disembodiment beyond this human-centric view.

Think about soft labor, a modern production framework heavily influenced by technology and automation. This pervasive cultural shift means we need to expand our understanding of disembodiment. What if the human crew in 2001 were like a virus in HAL's body, and the AI was simply trying to remove them? While not the film's intended message, this reframing highlights an uncomfortable truth: our narratives often privilege human existence, overlooking the implications of non-human intelligence making complex decisions.

Posthumanism and the Shifting Meaning of "Body"

The concept of posthumanism, emerging in the late 1990s, broadened this discussion. It marked a shift from modernism's focus on humans and history, and postmodernism's on humans and culture, to an exploration of humans and nature, with technology as an undeniable variable. Hayles initially explored posthumanism through the lens of technology driving disembodiment. But Karen Barad pushed this further, suggesting a unity between humanities and sciences, dissolving the traditional binary between humanity and nature. Barad championed "diffraction" over simple "reflection," urging us to see the subtle, interconnected changes in the material world.

Soft labor, characterized by its reliance on information flow and constant technological innovation, blurs the lines between intellectual and physical work. Consider automated car manufacturing: human physical labor is replaced by machines, with humans moving to supervisory roles. This shift challenges Hayles's earlier argument that technology erases embodiment to make knowledge an "inhuman" activity. Instead, it reveals ongoing, complex relationships between humans, technology, and nature. As AI becomes more sophisticated, especially generative AI models that can self-organize, our traditional understanding of embodiment and disembodiment is destabilized, pointing towards new forms of non-human "bodies."

The film Ghost in the Shell directly explores this: does an AI need a human-like body to be sentient, or can any "agential hardware" function as a body? The idea of a sentient being needing a discrete vessel is a human expectation, often for narrative closure. Yet, as the story evolves, the lines between human and AI, and between distinct bodies, blur, challenging our embodied knowledge.

This uneasiness with disembodied intelligence isn't new. Religious traditions have long imagined a soul or energy persisting after the material body dies. Historically, the "mind over body" philosophy, from ancient Stoicism to structuralism, favored intellectual detachment. Bruno Latour complicates this detachment with his argument that "we have never been modern": the modern project claims to practice "purification," keeping humans and non-humans in separate domains, while it constantly relies on "translation," the production of nature-culture hybrids. Soft labor, flowing across both practices, blurs this critical distance.

AI, Echo Chambers, and the "Post-Truth" Era

Today, machine learning algorithms on platforms like Facebook and Google exacerbate this blurring, creating echo chambers that reinforce comfortable worldviews. These recommendations, while lucrative for tech companies, are detrimental to social discourse, fueling polarization and misinformation, ushering us into a "post-truth" era. Remix strategies, often used to critique or persuade, further complicate the distinction between factual and fabricated content. The rapid spread of information across global networks, often driven by generative AI tools, makes critical evaluation harder than ever.
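
The feedback loop behind such echo chambers can be sketched in a few lines. This is a toy illustration, not how Facebook or Google actually rank content: it assumes a hypothetical catalog of items placed on a single opinion axis from -10 to +10, a recommender that shows whichever items sit closest to its running estimate of the user's taste, and a user who clicks the shown item nearest their own view. No step is ideological, yet opposing stances vanish from the feed after the first round.

```python
from statistics import mean

# Hypothetical catalog: each item is reduced to a stance on one opinion axis.
CATALOG = list(range(-10, 11))


def recommend(profile: float, k: int = 5) -> list:
    """Show the k items closest to the inferred profile (a crude engagement proxy)."""
    return sorted(CATALOG, key=lambda stance: abs(stance - profile))[:k]


def simulate(user_stance: int, rounds: int = 6, k: int = 5) -> list:
    """Return the items shown in each round of a click-driven feedback loop."""
    profile = 0.0  # the system starts with a neutral guess about the user
    clicks = []
    history = []
    for _ in range(rounds):
        shown = recommend(profile, k)
        history.append(shown)
        # The user engages with the shown item nearest their own stance...
        clicks.append(min(shown, key=lambda stance: abs(stance - user_stance)))
        # ...and the profile is re-estimated from past clicks alone.
        profile = mean(clicks)
    return history


history = simulate(user_stance=8)
for rnd, shown in enumerate(history, start=1):
    opposing = sum(1 for stance in shown if stance < 0)
    print(f"round {rnd}: shown={sorted(shown)} opposing={opposing}")
```

The user never asks to hide opposing views; the narrowing comes entirely from optimizing for closeness to past clicks.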

Technology, in its simplest definition a "systematic treatment," is inextricably linked to human existence. We create technology to enhance our capabilities, yet we often fear it as an "ultimate Other" that could overtake us. This fear is magnified when technology, like a neural network in AI, becomes a disembodied adversary, difficult to pinpoint or engage with physically, much like HAL in 2001. Stanley Kubrick himself hinted at this, describing Dr. Bowman being observed by "god-like entities" composed of pure energy and intelligence, without physical form. This profound vision questions whether AI needs a human body, suggesting intelligence can emerge as multiplicity.

The Drive for Compression: From Fractals to Fractals

Early artistic explorations of machine learning hint at this future. In 2001, artist Scott Draves presented Electric Sheep, an early experiment in machine learning built on a genetic algorithm. Users downloaded a screensaver that, while their computers sat idle, collectively rendered and evolved fractal flame animations, the "sheep." Users then voted on their favorites, determining which sheep would "survive" and which would "die." This project exemplified the dematerialization of art, the challenges of monetizing digital works (a precursor to NFTs), and the potential of distributed creativity.
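
The voting mechanism can be read as the fitness function of a genetic algorithm. A minimal sketch, under loud assumptions: each "sheep" is reduced to a hypothetical vector of six numbers standing in for fractal-flame parameters, and the audience's votes are simulated by scoring closeness to an arbitrary "taste" vector. This is not Electric Sheep's actual code or genome format, only the select, crossover, and mutate loop the paragraph describes.

```python
import random

GENOME_LEN = 6  # hypothetical stand-in for a fractal-flame parameter set


def random_genome(rng):
    return [rng.uniform(-1.0, 1.0) for _ in range(GENOME_LEN)]


def crossover(a, b, rng):
    """Splice two parent genomes at a random cut point."""
    cut = rng.randrange(1, GENOME_LEN)
    return a[:cut] + b[cut:]


def mutate(genome, rng, rate=0.2, scale=0.1):
    """Nudge a few genes at random."""
    return [g + rng.gauss(0.0, scale) if rng.random() < rate else g for g in genome]


def next_generation(flock, votes, rng, survivors=4):
    """Most-voted sheep survive; the rest "die" and are replaced by offspring."""
    ranked = sorted(range(len(flock)), key=lambda i: votes[i], reverse=True)
    parents = [flock[i] for i in ranked[:survivors]]
    children = []
    while len(parents) + len(children) < len(flock):
        a, b = rng.sample(parents, 2)
        children.append(mutate(crossover(a, b, rng), rng))
    return parents + children


def simulated_votes(flock, taste):
    """Hypothetical audience: sheep closer to `taste` receive higher scores."""
    return [-sum((g - t) ** 2 for g, t in zip(genome, taste)) for genome in flock]


rng = random.Random(42)
taste = [0.5] * GENOME_LEN
flock = [random_genome(rng) for _ in range(12)]
start_best = max(simulated_votes(flock, taste))
for _ in range(40):
    flock = next_generation(flock, simulated_votes(flock, taste), rng)
end_best = max(simulated_votes(flock, taste))
print(f"best score: {start_best:.3f} -> {end_best:.3f}")
```

Because the top-voted sheep are carried over unchanged, the best score can never get worse from one generation to the next; the audience, not the programmer, decides which forms persist.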

Draves's work underlines a fundamental human drive: to compress, innovate, and seek convenience. These three cultural variables fuel the "informational layer" that underpins our global economy. Capitalism, at its core, values abstract time-efficiency over monetary accumulation. The informational layer, built on data rather than physical goods, is ideal for deploying AI for advanced automation.

Convenience, defined as achieving something with "little effort or difficulty," is a primary motivator. It drives us to trade our personal actions (and often privacy) for time-saving benefits online. Innovation, validated by convenience, aims to accelerate production and compress labor. This creates a relentless cycle: innovation leads to profits, which are reinvested into more innovation, promising an improved quality of life even as it demands more from workers.

Compression, a conceptual tool as old as language itself, manifests physically (from room-sized computers to smartphones) and digitally (data compression). It minimizes redundancy for efficient transfer, enabling the exponential speed of information flow, fueling convenience and innovation.
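
The link between redundancy and compression is easy to demonstrate with Python's standard `zlib` module, a general-purpose lossless compressor chosen here only as an example: highly repetitive data shrinks dramatically, while random bytes, which contain almost no redundancy, barely shrink at all.

```python
import os
import zlib

redundant = b"sheep " * 1000   # 6,000 bytes of highly repetitive data
noise = os.urandom(6000)       # 6,000 bytes with (almost) no redundancy

for label, data in (("redundant", redundant), ("random", noise)):
    packed = zlib.compress(data, 9)          # level 9 = maximum compression effort
    assert zlib.decompress(packed) == data   # lossless: the original is fully recoverable
    print(f"{label}: {len(data)} bytes -> {len(packed)} bytes")
```

The random input may even come out a few bytes larger, because there is no repetition left to remove and the compressed format adds a small header and checksum.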

In the art world, compression has long been a technique, allowing a single image or sound to convey vast meaning. John Simon's Every Icon (a 32x32 grid that cycles through every possible black-and-white combination; merely exhausting the combinations of its first two rows would take about 5.85 billion years) playfully inverts this. It compresses the concept of exhaustive automation into an idea no human could ever experience in full, highlighting our finite existence against infinite computational possibilities. This piece, like many other works exploring computation and AI, reveals the current limits of machine learning: it excels at focused tasks through trial and error but cannot move beyond its programmed framework into open-ended, self-assigned creative exploration without Artificial General Intelligence (AGI).
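
These timescales follow from simple arithmetic. A sketch, assuming a display rate of 100 icons per second (a figure inferred here from the artist's own estimate of about 1.36 years for the first row, not taken from the artwork's code): a region of n cells has 2^n black-and-white combinations, so the time to exhaust it grows exponentially with n.

```python
SECONDS_PER_YEAR = 365.25 * 24 * 60 * 60
ICONS_PER_SECOND = 100  # assumed display rate (see lead-in)


def years_to_exhaust(cells: int) -> float:
    """Years needed to display all 2**cells black/white patterns of `cells` grid cells."""
    return 2 ** cells / ICONS_PER_SECOND / SECONDS_PER_YEAR


for label, cells in (("first row", 32), ("first two rows", 64), ("full 32x32 grid", 32 * 32)):
    print(f"{label}: {years_to_exhaust(cells):.3g} years")
```

Under this assumption the first row takes about 1.36 years and the first two rows about 5.85 billion years, while the full grid needs a number of years roughly 299 digits long.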

Simulation: The Fabric of Our Realities

The Matrix films famously asked, "What is real?" and eXistenZ similarly explored layers of simulated reality. This isn't just cinematic fantasy; simulation has three critical applications in our culture.

First, practical emulation: in science and engineering, simulation helps predict everything from weather patterns ("spaghetti models") and disease spread (e.g., COVID-19 vaccine development) to political outcomes and even catastrophic nuclear war scenarios (like Princeton's "Plan A," estimating 85.3 million casualties in 45 minutes). These simulations provide concrete insights for real-world decisions.
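
Disease-spread simulation can be illustrated with the textbook SIR model, which splits a population into susceptible, infected, and recovered fractions. This is a generic sketch, not the model behind any particular COVID-19 study: the parameters `beta` (transmission rate) and `gamma` (recovery rate) take illustrative values, and running the model for several slightly different values of `beta` produces a bundle of diverging curves, the same idea behind a forecaster's spaghetti plot.

```python
def simulate_sir(beta, gamma, s0=0.99, i0=0.01, days=160, steps_per_day=10):
    """Integrate the SIR equations with simple Euler steps; returns daily (S, I, R) fractions."""
    s, i, r = s0, i0, 0.0
    dt = 1.0 / steps_per_day
    history = [(s, i, r)]
    for _ in range(days):
        for _ in range(steps_per_day):
            new_infections = beta * s * i * dt
            new_recoveries = gamma * i * dt
            s -= new_infections
            i += new_infections - new_recoveries
            r += new_recoveries
        history.append((s, i, r))
    return history


# An "ensemble" of runs with perturbed transmission rates, like a spaghetti plot.
for beta in (0.25, 0.30, 0.35):
    infected = [i for _, i, _ in simulate_sir(beta, gamma=0.1)]
    peak_day = max(range(len(infected)), key=infected.__getitem__)
    print(f"beta={beta:.2f}: peak {max(infected):.1%} infected on day {peak_day}")
```

Higher transmission rates produce earlier, taller peaks; small changes in an uncertain parameter fan out into visibly different futures, which is why forecasters run many simulations rather than one.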

Second, ideological space: Jean Baudrillard's Simulacra and Simulation argues that the "map precedes the territory," meaning models of reality (simulacra) can create a "hyperreal" that eclipses actual reality. Social media's recommendation algorithms foster echo chambers where individuals perceive a perfectly tailored, yet potentially false, reality. The rise of QAnon or of political deepfakes illustrates how fabricated content, increasingly generated by AI, can become indistinguishable from truth.

Third, engagement with media: Jay David Bolter and Richard Grusin's "remediation" theory describes how new media reconfigure older ones (e.g., digital photography remediating analog). They distinguish between "immediacy" (immersion, in which the medium seems to disappear) and "hypermediacy" (heightened awareness of the medium). While Baudrillard focuses on ideology, Bolter and Grusin emphasize the practical engagement with media. However, these aren't mutually exclusive. Consider a live music concert: the audience often judges the live performance against the recorded version they first heard. The recording, a simulacrum, effectively becomes the "original," shaping their perception of the "real" performance.

What is AI at its core? It's a simulation. AI systems are continually redesigned to approximate human intelligence, creating their own "cognitive maps" of the world. The Turing Test, a classic benchmark, asks whether a machine can fool a human into believing it's human. As AI advances, especially agentic AI, it is not merely copying human intelligence but remediating it, creating a new, potentially superior form. This isn't an exact copy but a metasimulacrum: a new reality in which human intelligence might become a trace within an AI-generated framework. Deepfakes, produced and detected by generative AI tools, are stark examples of metasimulacra actively redefining reality.

The Environment: Agency and AI

Natalie Jeremijenko's public art installation TREExOFFICE in London, inspired by "The Tree That Owns Itself" in Athens, Georgia, offers a profound commentary. By treating a tree as a landlord whose rent proceeds are reinvested in its well-being, Jeremijenko critiques human-nature relationships and property rights. This raises posthumanist questions about agency for non-human entities, including self-training algorithms.

Historically, humans domesticated nature, shifting from a symbiotic relationship to an extractive one. The Enlightenment, coupled with scientific advancements like Darwin's theory of evolution, legitimized human control over natural resources, leading to concepts like eugenics and today's discussions around "designer babies." This patriarchal and colonial mindset, viewing nature as a passive entity, still shapes our engagement with the environment.

Machine learning algorithms in social media contribute to this by creating echo chambers, which obscure the symbiotic relationship between humans and nature. Yet, there's growing awareness that culture and nature are interconnected. Understanding this symbiosis requires examining concepts like emergence and complexity.

Emergence, the appearance of novel properties from simpler interactions (like a seed growing into a tree), and complexity, describing intricate, non-linear systems (like a bustling city or a neural network in AI), are crucial. These shape both natural and cultural phenomena. Complexity science, an interdisciplinary field that relies heavily on computer science, uses algorithms to analyze patterns and develop simulations for predictive measures across diverse areas, from weather forecasts to political campaigns.
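
Emergence is often illustrated with Conway's Game of Life, a cellular automaton swapped in here as a standard example (the text does not mention it). Each cell follows only local birth and survival rules, yet a five-cell "glider" emerges that travels diagonally across the grid, a behavior written nowhere in the rules.

```python
from collections import Counter


def step(live):
    """Advance one generation; `live` is a set of (x, y) coordinates of living cells."""
    neighbor_counts = Counter(
        (x + dx, y + dy)
        for x, y in live
        for dx in (-1, 0, 1)
        for dy in (-1, 0, 1)
        if (dx, dy) != (0, 0)
    )
    # Birth with exactly 3 live neighbors; survival with 2 or 3.
    return {cell for cell, n in neighbor_counts.items() if n == 3 or (n == 2 and cell in live)}


glider = {(1, 0), (2, 1), (0, 2), (1, 2), (2, 2)}
state = glider
for generation in range(4):
    state = step(state)
# After four generations the same shape reappears, shifted one cell right and one down.
print(state == {(x + 1, y + 1) for x, y in glider})
```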

Sociologist Gabriel Tarde observed that human behavior is shaped by imitation, repetition, and adaptation. Tony Sampson's "Contagion Theory" updates this for social networks, explaining "molar virality" (unified group behavior) and "molecular virality" (individual desires, like vaccine resistance). Machine learning algorithms are, wittingly or not, emulating these forms of virality.
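
Sampson's theory is not itself a computational model, but Tarde-style imitation is often simulated in network science with threshold models. The following sketch uses a linear threshold cascade, a technique swapped in here for illustration: each person in a hypothetical network adopts a behavior once the share of their contacts who have adopted it reaches a personal threshold. Small differences in thresholds decide whether a behavior sweeps the whole group or stalls.

```python
def cascade(neighbors, thresholds, seeds):
    """Spread adoption until no one else crosses their imitation threshold."""
    adopted = set(seeds)
    changed = True
    while changed:
        changed = False
        for person, contacts in neighbors.items():
            if person in adopted or not contacts:
                continue
            share = sum(c in adopted for c in contacts) / len(contacts)
            if share >= thresholds[person]:
                adopted.add(person)
                changed = True
    return adopted


# A hypothetical five-person network.
network = {
    "a": ["b", "c"],
    "b": ["a", "c", "d"],
    "c": ["a", "b", "d"],
    "d": ["b", "c", "e"],
    "e": ["d"],
}
easygoing = {"a": 0.5, "b": 0.3, "c": 0.6, "d": 0.5, "e": 0.9}
reluctant = dict(easygoing, c=0.7, d=0.7)

print(sorted(cascade(network, easygoing, seeds={"a"})))  # spreads through everyone
print(sorted(cascade(network, reluctant, seeds={"a"})))  # stalls after one imitator
```

Raising two thresholds by a tenth is enough to turn a group-wide wave into a local ripple, a crude analogue of how individual dispositions shape group-wide contagion.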

Jeremijenko's TREExOFFICE and philosopher David Gunkel's Robot Rights act as meta-critiques. Jeremijenko challenges human property rights by extending agency to a tree, exposing the economic infrastructures that sustain those rights. Gunkel asks where ethical and moral responsibility lies as agentic AI develops. Both highlight how human-made systems shape our perception of nature and non-human intelligence, revealing the emergent and complex interplay of culture and nature, mediated by evolving forms of AI.

Art and Metacreativity: A Historical Progression

The journey to art created by generative AI models is a long one, rooted in shifts from modernism to postmodernism and digital culture.

Modernism saw artists exploring scientific approaches (Seurat's color theory), psychological depths (Surrealist "automatism"), and questions of authorship. Charles Babbage and Ada Byron laid the groundwork for computing, while artists like Marcel Duchamp, a "human compiler," redefined art by selecting and recontextualizing readymade objects. Nicolas Schöffer's cybernetic sculptures and John Cage's audience-participatory pieces (like 4′33″), which made ambient sounds part of the composition, hinted at algorithmic art and delegated creative labor. Moholy-Nagy's "telephone paintings," where he gave instructions to a sign factory, are early examples of art created through algorithms rather than the artist's direct hand.

Postmodernism embraced pluralism and fragmentation, questioning linear progress. Modularity, central to computing, manifested in art through diverse forms. Artists like Nam June Paik (with Random Access) explored modularity and randomness, while Jean Tinguely's self-destructing sculptures (like Homage to New York) reflected anxieties about technology's autonomous capabilities. Collaborations between artists and scientists (e.g., Experiments in Art and Technology, Les Levine's Contact) explored human perception and machine interaction. Early computer image manipulation, like Kenneth Knowlton and Leon Harmon's Studies in Perception 1, foreshadowed AI's ability to "fool the human eye." Nicholas Negroponte's Seek even used gerbils and a robotic arm to explore intelligence.
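
Knowlton and Harmon's piece rendered a scanned photograph as a mosaic of small symbols whose visual density stood in for shades of gray, so that the eye reassembles an image from marks that are not themselves pictures. The principle can be sketched by mapping brightness values to characters of increasing ink coverage; the character ramp and the 0 to 255 brightness scale below are assumptions for illustration, not their actual symbol set or hardware.

```python
# Hypothetical ramp from lightest (blank) to darkest (densest) character.
RAMP = " .:-=+*#%@"


def to_symbols(gray_rows):
    """Map brightness values (0 = black, 255 = white) to ramp characters, row by row."""
    levels = len(RAMP) - 1
    return ["".join(RAMP[(255 - v) * levels // 255] for v in row) for row in gray_rows]


# A tiny synthetic "photograph": a dark blob fading into a light background.
image = [
    [255, 200, 150, 200, 255],
    [200, 90, 30, 90, 200],
    [150, 30, 0, 30, 150],
    [200, 90, 30, 90, 200],
    [255, 200, 150, 200, 255],
]
for line in to_symbols(image):
    print(line)
```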

Conceptual Art, flourishing in the late 1960s and 70s, dematerialized the art object, focusing on ideas and systematic processes. Martha Rosler's Semiotics of the Kitchen critiqued societal roles through semiotic displacement, while artists like Lawrence Weiner and Joseph Kosuth used algorithm-like statements and instructions. Sol LeWitt's wall drawings, executed by others following his instructions, were essentially open-ended algorithms. This systematic, meta-level critique of art practices laid much of the conceptual groundwork for digital art and, eventually, generative AI.
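
LeWitt's method maps neatly onto the idea of an algorithm executed by different processors. The sketch below encodes a made-up instruction in his spirit (it is not the text of any actual wall drawing): "draw N straight lines, each joining two points chosen at random on the wall." Each drafter is modeled as a differently seeded random-number generator, so the same instruction yields a different drawing every time it is executed, yet every result obeys the rule.

```python
import random


def execute_instruction(n_lines, width, height, drafter_seed):
    """Carry out a hypothetical instruction: n straight lines between random wall points."""
    drafter = random.Random(drafter_seed)  # each drafter makes their own choices

    def point():
        return (drafter.randint(0, width), drafter.randint(0, height))

    return [(point(), point()) for _ in range(n_lines)]


wall_a = execute_instruction(10, width=300, height=200, drafter_seed=1)
wall_b = execute_instruction(10, width=300, height=200, drafter_seed=2)
print(wall_a == wall_b)  # different drafters, different drawings
print(all(0 <= x <= 300 and 0 <= y <= 200 for line in wall_a + wall_b for x, y in line))
```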

Digital Art, building on conceptualism, embraced dematerialization and global accessibility. On Kawara's date paintings find contemporary equivalents in Twitter art bots that record daily data. Vito Acconci's performance art, often based on if/then conditions, aligns with computer pseudo-code. Casey Reas, inspired by LeWitt, has curated exhibitions where artist-programmers interpret instructions to create art. Early digital works like Christa Sommerer and Laurent Mignonneau's A-Volve (creatures in a simulated world) and Ken Feingold's If/Then (talking humanoid heads) explored AI metaphorically. Ben Rubin and Mark Hansen's Listening Post used big data and machine learning principles to analyze online phrases, while Lynn Hershman Leeson's CybeRoberta explored human-technology interaction without direct AI. David Rokeby's The Giver of Names, an interactive installation that "perceives" and describes objects, has evolved to incorporate AI, framed as "Alien Intelligence." These works prepared the conceptual ground for metacreativity.

The Age of Metacreativity

Metacreativity, where creative processes extend beyond human production to include non-human systems, is now a reality. DeepMind, co-founded by Demis Hassabis, did not build AlphaGo by hand-coding a Go strategy. Instead, its team wrote a learning system, in effect a "meta-program," that developed its own way of playing, first by studying records of human games and then by playing against itself. AlphaGo's 2016 victory over Lee Sedol, one of the world's strongest players, demonstrated this delegated creative labor.
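
AlphaGo itself combined deep neural networks with Monte Carlo tree search and is far too large to reproduce here. The underlying shift, from writing a strategy to writing a procedure that finds one, can still be shown on a toy game. In this sketch, which uses exhaustive game-tree search rather than AlphaGo's methods, the programmer supplies only the rules of a simple Nim variant (players alternately remove one to three stones; whoever takes the last stone wins), and the program derives the winning policy on its own.

```python
from functools import lru_cache
from typing import Dict, Optional

MOVES = (1, 2, 3)  # the rules: remove 1, 2, or 3 stones; taking the last stone wins


@lru_cache(maxsize=None)
def can_force_win(stones: int) -> bool:
    """True if the player about to move can force a win from this position."""
    # A position is winning if some legal move leaves the opponent in a losing one.
    # With 0 stones left, the previous player took the last stone, so this is a loss.
    return any(not can_force_win(stones - m) for m in MOVES if m <= stones)


def derive_policy(max_stones: int) -> Dict[int, Optional[int]]:
    """Build a move table from the rules alone; None marks positions that cannot be won."""
    policy = {}
    for stones in range(1, max_stones + 1):
        winning = [m for m in MOVES if m <= stones and not can_force_win(stones - m)]
        policy[stones] = winning[0] if winning else None
    return policy


policy = derive_policy(12)
print(policy)
```

The resulting table encodes the classic rule "leave your opponent a multiple of four," even though that rule appears nowhere in the code.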

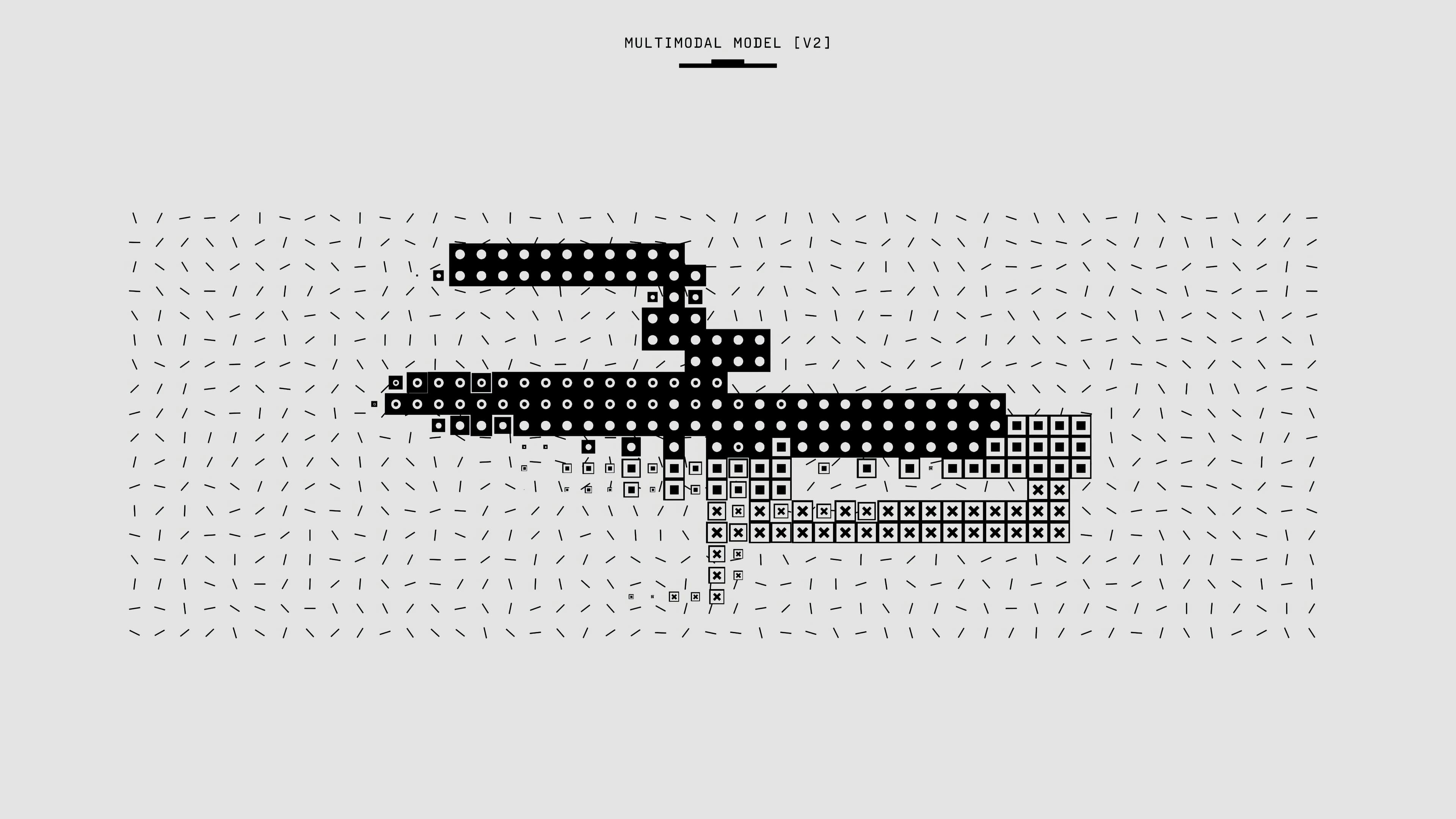

Today, generative AI tools like Deep Dream Generator and Instapainting offer filter-like options for image manipulation, though some critics like Memo Akten dismiss these as derivative. Akten himself, using Generative Adversarial Networks (GANs), pushes boundaries by feeding algorithms hundreds of images, allowing the algorithm to reshape and learn from them, as seen in Learning To See: We Are Made of Stardust 2. Golan Levin's Terrapattern uses machine learning to identify similar architectural or natural forms, delegating a time-consuming task to the machine. Adam Ferriss and Helena Sarin use GANs to extend photographic possibilities or enhance their own drawings, allowing the AI to make aesthetic decisions. Anna Ridler's Mosaic Virus even trains GANs to produce tulip images whose appearance fluctuates with Bitcoin's value, offering a critical commentary on historical speculation.

The Portrait of Edmond de Belamy by the Obvious collective, which sold for $432,500 at Christie's in 2018, functions much like Duchamp's readymades. By appropriating code to produce "art-like" digital images, Obvious updates Duchamp's strategy for the digital age. Unlike Duchamp, who faced rejection, Obvious's work was readily embraced by the art establishment, illustrating how quickly the art world now assimilates challenges to its definitions. Even Banksy's self-shredding Girl with Balloon only increased in value.

We are in a deeper state of "meta" not just in creation but also in consumption. Metacreativity represents a second-order simulation, a postsimulacrum, in which artists delegate the creative process to machine learning algorithms. The artist no longer writes the art but writes the framework for the AI to learn and create art. This raises constant questions of authorship and the future of creativity itself, as AI continues to blur the lines between human and machine ingenuity.