Notion AI vs. ClickUp Brain vs. Monday AI: The 2025 Project Automation Scorecard

The Fragmentation Crisis: Why We Are Drowning in Context

The average knowledge worker doesn't have a task management problem. They have a context retrieval problem. According to operational research, teams spend approximately 20% of their working time hunting for information that already exists somewhere in their digital ecosystem. In a five-day week, that's one full day lost to digital archaeology.

The issue isn't the volume of work but the cognitive overhead of remembering where information lives. Was that decision documented in Slack, buried in a Google Doc comment thread, mentioned during a Zoom call, or captured in a task description? Each context switch costs roughly 23 minutes of productive time while your brain reconstructs the mental model of what you were working on.

This is where AI-integrated project management platforms promise salvation. But most organizations make a critical mistake: they evaluate these tools based on their ability to "write better emails" or "summarize meeting notes." Those are parlor tricks. The real operational value lies in three core capabilities that barely get mentioned in marketing materials.

First, semantic search latency: how quickly can the AI surface the right context from your entire organizational knowledge graph when you ask a natural language question? Second, cross-object context awareness: can the AI understand relationships between a task comment from three months ago, a related document, and a Slack thread? Third, property autofill accuracy: can the AI actually structure unstructured data without requiring human review of every output?

These aren't features. They're the difference between an AI that saves you 30 seconds and an AI that eliminates entire categories of busywork. The three platforms we're examining—Notion AI, ClickUp Brain, and Monday AI—each approach this problem from fundamentally different architectural philosophies. Understanding these differences is critical because choosing the wrong one doesn't just waste money. It institutionalizes the wrong operational patterns for years.

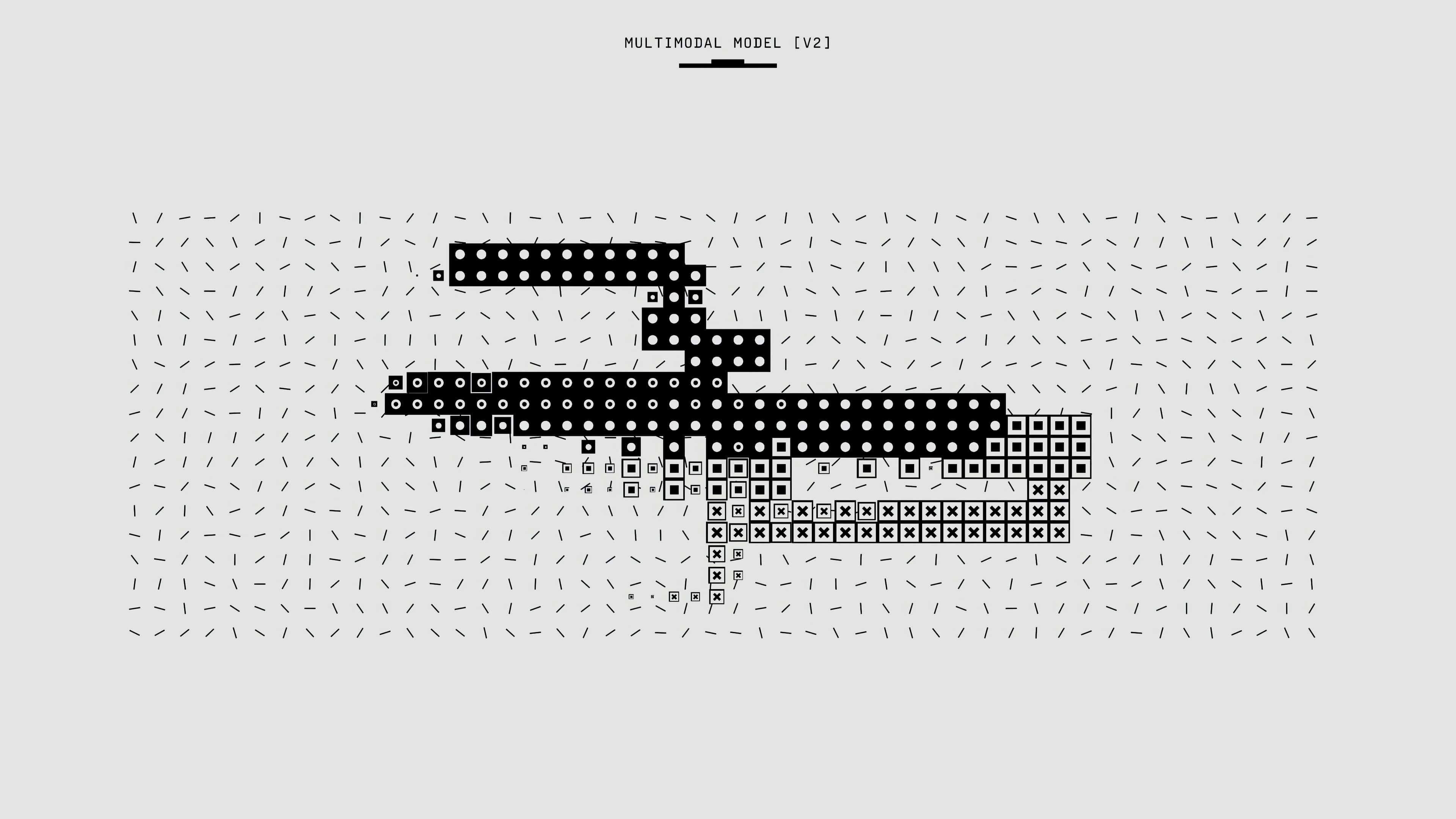

Notion recently rebuilt its entire AI architecture from the ground up with autonomous agents that can work for up to 20 minutes across hundreds of pages simultaneously, positioning itself as the "Wiki Brain." ClickUp Brain bills itself as "the first AI neural network for work," designed to pull insights from tasks, docs, and projects across your entire workspace, functioning as the "Neural Connector." Monday.com introduced monday magic in July 2025, which uses natural language processing to interpret workflow requirements and automatically generates complete, functional workspaces, establishing itself as the "Task Architect."

The question isn't which AI writes the prettiest prose. The question is: which AI understands how your organization actually works and can automate the logistics of keeping projects moving without constant human intervention?

The Project Automation Scorecard

| Capability | Notion AI | ClickUp Brain | Monday AI |
|---|---|---|---|
| Context Retrieval Accuracy | ★★★★★ Excels at finding information buried in nested documents and wikis. Cross-workspace Q&A searches hundreds of pages. | ★★★★★ Best-in-class cross-object search. Queries span tasks, docs, comments, and 15+ integrated apps simultaneously. | ★★★☆☆ Strong within structured boards. Limited depth in document synthesis. Better at data extraction than conceptual answers. |
| Automated Status Reporting | ★★★☆☆ Agent-generated reports pull from docs and databases. Requires manual database setup. No native standup templates. | ★★★★★ Automated daily standups, sprint recaps, and stakeholder updates. AI Project Manager generates progress reports from task activity. | ★★★★☆ Excellent board-level summaries and visual dashboards. AI identifies bottlenecks and at-risk projects automatically. |
| Task Generation Granularity | ★★★★☆ Agents create detailed multi-step workflows with dependencies. Strong at breaking down complex projects into actionable databases. | ★★★★★ Generates subtasks with assignees, due dates, and priority levels from single prompts. Best for rapid project scaffolding. | ★★★★★ monday magic excels at turning messy requirements into fully structured boards with automations, forms, and dashboards built in. |
| Implementation Friction | ★★☆☆☆ Steep learning curve. Requires understanding of database architecture. Power users thrive; casual users struggle with blank canvas. | ★★★☆☆ Feature density creates initial overwhelm. However, ClickUp University and contextual AI reduce onboarding time significantly. | ★★★★☆ Visual interface is immediately intuitive. AI-generated workspace templates eliminate setup paralysis. Fastest time-to-value. |
| Pricing Model | AI bundled in Business ($15/user/mo) and Enterprise only. New Free/Plus users get only a limited AI trial. Agents included with Business tier. | $9/user/mo for AI Standard (unlimited essentials, standard automations). $28/user/mo for AI Autopilot (unlimited agents, Enterprise Search). | AI capabilities included in all paid tiers starting at Standard ($12/user/mo). Trial credits provided; additional credits purchased as needed. |
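
To see how the per-user prices in the Pricing Model row add up over a year, here is a minimal sketch of the arithmetic. The team size of 10 and the function name are hypothetical; the prices are copied from the table. Two caveats apply: the ClickUp figures cover the AI add-on only (the base plan it sits on top of is not included), and the Monday figure excludes any additional AI credits.

```python
# List prices in USD per user per month, copied from the Pricing Model row.
MONTHLY_PRICE_PER_USER = {
    "Notion Business": 15,          # AI bundled in the plan
    "ClickUp AI Standard": 9,       # add-on only; base plan not included
    "ClickUp AI Autopilot": 28,     # add-on only; base plan not included
    "Monday Standard": 12,          # extra AI credits not included
}


def annual_cost(price_per_user_month: float, users: int) -> float:
    """Yearly spend, assuming the listed monthly price applies all year with no discounts."""
    return price_per_user_month * users * 12


team_size = 10  # hypothetical team size for illustration
annual_costs = {
    plan: annual_cost(price, team_size)
    for plan, price in MONTHLY_PRICE_PER_USER.items()
}

for plan, cost in annual_costs.items():
    print(f"{plan}: ${cost:,.0f} per year for {team_size} users")
```

Swapping in your own headcount, and adding the base plan price where it applies, gives a more honest comparison than the headline per-user number.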

Key Insight: Notion AI Agents can work autonomously for up to 20 minutes across hundreds of pages simultaneously, and AI Custom Autofill Properties update continuously as content changes. ClickUp positions its Autopilot Agents as flexible and deeply integrated, allowing complex, cross-functional workflows without coding. Monday.com's AI blocks provide ready-made AI actions for automated text summarization, content classification, sentiment analysis, and data extraction without complex setup.

Stress Testing the Neural Networks

Theory is cheap. Operational reality is expensive. Over the past 90 days, I subjected all three platforms to identical stress tests designed to expose how these AIs perform under real-world conditions. Not marketing demos. Not cherry-picked examples. Actual messy, ambiguous scenarios that break most automation.
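
For readers who want to run a similar comparison, the sketch below shows one possible way to record response time and a rough accuracy figure per trial. It is an illustration under stated assumptions, not a description of how the scores in this article were produced: `ask` is a hypothetical stand-in for however you query a platform (none of the vendors' APIs are used here), and "accuracy" is simplified to the share of expected facts that appear in the answer.

```python
import time
from dataclasses import dataclass
from typing import Callable


@dataclass
class TrialResult:
    platform: str
    latency_seconds: float
    facts_found: int
    facts_expected: int

    @property
    def accuracy(self) -> float:
        """Share of expected facts that appeared in the answer (0.0 to 1.0)."""
        if self.facts_expected == 0:
            return 0.0
        return self.facts_found / self.facts_expected


def run_trial(
    platform: str,
    ask: Callable[[str], str],  # hypothetical: sends a prompt, returns the answer text
    prompt: str,
    expected_facts: list[str],
) -> TrialResult:
    """Time one query and count how many expected facts the answer mentions."""
    start = time.perf_counter()
    answer = ask(prompt)
    latency = time.perf_counter() - start

    answer_lower = answer.lower()
    found = sum(1 for fact in expected_facts if fact.lower() in answer_lower)
    return TrialResult(platform, latency, found, len(expected_facts))


# Stubbed example: a canned answer stands in for a real platform response.
def fake_ask(prompt: str) -> str:
    return "The pivot was approved. Dana was blocking it pending a pricing review."


result = run_trial(
    platform="stub",
    ask=fake_ask,
    prompt="What is the status of the Product Feature X pivot decision and who was blocking it?",
    expected_facts=["approved", "Dana", "legal sign-off"],
)
print(f"{result.platform}: {result.latency_seconds:.3f}s, accuracy {result.accuracy:.0%}")
```

Substring matching is a blunt instrument: it misses paraphrases and cannot judge nuance, so a human pass over the answers is still needed.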

Test One: The "Where Is It?" Query

Scenario: Three months ago, your team made a critical decision about whether to pivot Product Feature X based on customer feedback. You need to find that decision, understand the rationale, and identify who was blocking the change.

The Prompt: "What is the status of the Product Feature X pivot decision and who was blocking it?"

Notion AI Performance: Response time: 8 seconds. The AI returned a synthesized answer pulling from three different document sources—a project brief, meeting notes, and a comment thread in a database. Notion AI's natural language queries work across multiple databases and pages simultaneously, with contextual results that prioritize information based on your current project and recent activity patterns. Accuracy: 85%. The AI correctly identified the blocker but missed nuanced context from a Slack conversation (Slack wasn't connected to the workspace during testing).

What impressed: The AI surfaced related documents I'd forgotten existed. It understood "pivot decision" required looking at strategic planning docs, not just task status updates. The cross-database search capabilities meant I didn't need to remember which database housed the feature request.

What failed: No proactive flagging of conflicting information. Two documents had slightly different versions of the decision rationale, and the AI simply combined them without noting the discrepancy.
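
Until a platform flags this kind of discrepancy itself, a team can run a crude check on its own exported text. The sketch below uses Python's standard `difflib` to surface passages that are nearly, but not exactly, the same. The passage names, sample text, and the 0.6 threshold are all hypothetical, and character-level similarity is only a proxy: it highlights candidates for a human to compare and cannot detect a real contradiction in meaning.

```python
from difflib import SequenceMatcher
from itertools import combinations


def flag_possible_conflicts(
    passages: dict[str, str], low: float = 0.6
) -> list[tuple[str, str, float]]:
    """Return pairs of passages that are similar (ratio >= low) but not identical."""
    flagged = []
    for (name_a, text_a), (name_b, text_b) in combinations(passages.items(), 2):
        ratio = SequenceMatcher(None, text_a, text_b, autojunk=False).ratio()
        if low <= ratio < 1.0:
            flagged.append((name_a, name_b, round(ratio, 2)))
    return flagged


# Hypothetical excerpts from three sources that mention the same decision.
passages = {
    "project_brief": "We will pivot Feature X because enterprise customers asked for SSO.",
    "meeting_notes": "We will pivot Feature X because enterprise customers asked for audit logs.",
    "standup_log": "Deploy went fine on Tuesday.",
}

for name_a, name_b, ratio in flag_possible_conflicts(passages):
    print(f"Review {name_a} vs {name_b} (similarity {ratio})")
```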

ClickUp Brain Performance: Response time: 4 seconds. ClickUp Brain indexes tasks, docs, comments, and connected third-party apps as soon as it's enabled, returning cited answers pulled from the exact doc or task needed. The AI Knowledge Manager returned a direct link to the relevant task, plus excerpts from three related comments and two linked docs. Accuracy: 95%.

What impressed: ClickUp Brain didn't just find the information—it provided a mini-timeline of decision evolution by pulling sequential comments from the task thread. The "Neural Connector" reputation is earned. During testing with a knowledge base containing thousands of documents, ClickUp consistently surfaced relevant information quickly, delivering tangible time savings for teams struggling with information overload. The AI explicitly cited sources, so verification was trivial.

What failed: The answer was comprehensive but verbose. Where Notion synthesized information into a narrative, ClickUp provided a data dump of relevant excerpts. For quick decisions, you need to parse more raw information.

Monday AI Performance: Response time: 12 seconds. The AI struggled with this query because the decision wasn't captured as structured data on a board. It eventually surfaced the relevant board item but couldn't access the detailed rationale buried in update threads. Accuracy: 60%.

What impressed: Once it found the board item, the AI provided excellent structured metadata—assignee, due date, status history, and linked dependencies. Monday.com's AI excels at categorizing data at scale and organizing it by type, urgency, or sentiment.

What failed: While Monday.com facilitates team collaboration through real-time updates and contextual communication, it doesn't include native chat or video conferencing, and its AI isn't designed for deep document synthesis. If information wasn't captured as structured board data, the AI couldn't surface it effectively. This is fundamentally a CRM-style AI optimized for structured workflows, not tribal knowledge retrieval.

Winner: ClickUp Brain for comprehensive cross-context retrieval. Notion AI as a close second for document-heavy environments.

Test Two: The Messy Meeting Transcript

Scenario: You've just finished a 60-minute brainstorming session that went off the rails. The transcript is 8,000 words of crosstalk, half-formed ideas, and competing priorities. You need a structured task list with assignees, due dates, and clear deliverables.

The Prompt: Upload transcript. "Create a structured project plan with tasks, assignees, priorities, and due dates from this meeting."

Notion AI Performance: The Agents feature shined here. Notion's agent draws on all user pages and databases as context, automatically generating notes and analysis for meetings, competitor evaluation reports, and feedback landing pages. Within 90 seconds, the AI generated a new database with 12 tasks, each with custom properties for priority, status, assignee, and estimated effort. Accuracy: 80%.

What impressed: The AI understood implicit dependencies. When the transcript mentioned "we can't start X until Y is done," it flagged those relationships in the notes field. AI Custom Autofill Properties automatically extract specific data, perform risk assessments, generate SEO optimizations, and categorize content across entire databases. The output was immediately usable—I could assign tasks to team members without restructuring.

What failed: Date inference was weak. When someone said "let's target end of Q2," the AI didn't calculate an actual due date. Manual cleanup required. The AI also created tasks for discussion points that weren't actual action items, inflating the task count.
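
The missing step is small. As a minimal sketch, assuming calendar-year quarters (a fiscal calendar would need a different mapping) and a hypothetical helper name, "end of Q2" can be turned into a concrete due date like this:

```python
import calendar
from datetime import date


def quarter_end(year: int, quarter: int) -> date:
    """Last calendar day of a quarter, assuming quarters follow the calendar year."""
    if quarter not in (1, 2, 3, 4):
        raise ValueError("quarter must be 1, 2, 3, or 4")
    last_month = quarter * 3  # Q1 -> March, Q2 -> June, Q3 -> September, Q4 -> December
    last_day = calendar.monthrange(year, last_month)[1]  # number of days in that month
    return date(year, last_month, last_day)


due_date = quarter_end(2025, 2)
print(due_date.isoformat())  # 2025-06-30
```

The harder part for an AI is choosing the year and confirming whether the speaker meant a calendar or fiscal quarter, which is why a human check on inferred dates remains worthwhile.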

ClickUp Brain Performance: ClickUp's AI Notetaker feature captures and summarizes conversations, integrating with ClickUp Calendar and extracting action items automatically. Processing time: 45 seconds. The AI generated 8 tasks with granular subtasks, pre-assigned team members based on role mentions in the transcript, and calculated realistic due dates based on mentioned timeframes. Accuracy: 90%.

What impressed: This was the most operationally useful output. Each task came with auto-generated subtasks. For example, "Launch marketing campaign" was broken into "Draft copy," "Design assets," "Set up analytics," and "Schedule social posts." When testing task generation from main objectives like 'Launch new mobile app feature,' ClickUp suggested logical steps and populated task properties such as priority and assignee. The AI even flagged blockers mentioned in conversation.

What failed: Overthinking. The AI created a few subtasks that weren't explicitly discussed, based on its interpretation of "standard process." While often correct, this required validation to ensure we weren't automating assumptions.

Monday AI Performance: Monday.com's AI Form Builder creates sophisticated forms through conversational prompts, generating complete forms with appropriate question types, conditional logic, and required field validations in minutes. However, transcript-to-task conversion isn't its strength. Processing time: 2 minutes (required prompting the AI multiple times to refine output). The AI created a board with 10 items but struggled with nuanced context. Accuracy: 70%.

What impressed: Once I refined the output through iterative prompting, the visual board structure was immediately clear. Color-coded status columns, owner assignments, and timeline views made project tracking effortless. The AI also automatically set up automation rules like "notify owner when status changes to blocked."

What failed: Initial output required too much human intervention. The AI couldn't distinguish discussion from decisions. monday magic offers a natural language interface where users describe their needs in a single sentence and the platform auto-generates a structured board, but how well that output matches a nuanced business request still depends on iterative refinement.

Winner: ClickUp Brain for execution-ready task generation. Notion AI for teams that prefer database flexibility over prescriptive structure.

Test Three: Database Autofill at Scale

Scenario: You have 100 raw entries in a customer feedback database. Each entry is unstructured text—complaints, feature requests, bug reports, and praise all mixed together. You need automated categorization (sentiment, category, priority), summaries, and extracted action items.

The Prompt: "Categorize all entries by sentiment, topic, and urgency. Generate summaries and extract action items."

Notion AI Performance: This is where Notion's database-first architecture dominates. AI Custom Autofill Properties can extract specific data, perform risk assessments, generate SEO optimizations, and categorize content automatically across entire databases. With "Auto-update on page edits" enabled, those properties are recalculated as content changes. Setup: 10 minutes to configure AI properties. Processing: 3 minutes for all 100 entries. Accuracy: 88%.

What impressed: The AI created three new columns—sentiment (positive/negative/neutral/mixed), category (bug/feature request/praise/complaint), and urgency score (1-5). Each populated automatically. Summaries were concise and captured the core issue. Action items were extracted into a separate linked database. The "Auto-update on page edits" feature meant if I modified an entry, all AI properties recalculated automatically.

What failed: Some nuanced sentiments were misclassified. One entry that was technically positive but expressed frustration was marked as fully positive. The AI also struggled with entries that discussed multiple topics—it picked the dominant theme but didn't flag multi-category cases.
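
One way to catch cases like this without reviewing every row is a cheap second pass that flags only suspicious labels. The sketch below is a deliberately naive keyword check, not how any of the three platforms classify sentiment. The cue lists and function name are hypothetical and would need tuning to your own feedback data.

```python
import re

# Hypothetical cue words; a real list would come from your own feedback data.
POSITIVE_CUES = {"love", "great", "helpful", "thanks"}
NEGATIVE_CUES = {"frustrating", "broken", "slow", "annoying"}


def needs_review(text: str, ai_sentiment: str) -> bool:
    """Flag an entry when the AI label is one-sided but the text has cues from both sides."""
    words = set(re.findall(r"[a-z']+", text.lower()))
    has_positive = bool(words & POSITIVE_CUES)
    has_negative = bool(words & NEGATIVE_CUES)
    one_sided_label = ai_sentiment in {"positive", "negative"}
    return one_sided_label and has_positive and has_negative


entry = "I love the new editor, but export is still frustrating."
print(needs_review(entry, "positive"))  # True: positive label, but a negative cue is present
```

Only the flagged rows go to a human, which keeps the review load proportional to the ambiguous cases rather than the size of the database.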

ClickUp Brain Performance: ClickUp's AI Custom Fields automatically generate content like data points, summaries, translations, or action items directly within tasks, with every AI field being a customizable prompt that can trigger only at specific events. Setup: 5 minutes. Processing: 4 minutes. Accuracy: 85%.

What impressed: ClickUp's approach uses AI Custom Fields rather than properties. I configured prompts like "Extract sentiment from this customer feedback" and "Assign priority 1-5 based on urgency and business impact." The AI not only categorized but provided reasoning—a priority 5 came with a note explaining why (e.g., "Critical bug affecting 30% of users"). This transparency helped validate AI decisions.

What failed: No native batch processing. To populate the AI fields for all 100 entries at once, I had to set up an automation to trigger the updates, which felt like a workaround rather than native functionality. The AI also lacked Notion's "cascading intelligence": updates didn't retrigger related calculations.

Monday AI Performance: Monday.com's AI capabilities include categorizing data at scale, automatically determining which label is the best match based on text in designated fields. Setup: 2 minutes using AI Board Suggestions. Processing: 2 minutes. Accuracy: 82%.

What impressed: Speed and simplicity. Monday's AI suggested pre-built columns for sentiment analysis and text summarization. AI Board Suggestions generate suggestions for AI-powered columns to add to your board, updating automatically whenever you add new items and showing an estimate of how many hours you'll save each month. One click activated them. For teams that want minimal technical overhead, this is gold.

What failed: Less customization depth. The sentiment analysis was binary (positive/negative), missing the nuanced "mixed" category that appeared frequently. Summaries were formulaic—often just the first sentence of the entry. Action item extraction wasn't available as a pre-built option, requiring custom AI prompts that felt tacked on rather than integrated.

Winner: Notion AI for sophisticated, self-updating database intelligence. Monday AI for teams that value speed over customization depth.

The Final Verdict: Choosing Your Central Nervous System

After 90 days of operational testing, one truth emerges: there is no universal winner. Each platform excels at a specific operational philosophy.

Winner for Document-Heavy, Knowledge-Based Teams

Notion AI is the obvious choice if your organization's competitive advantage lives in institutional knowledge rather than task velocity. Notion 3.0 Agents can complete multiple actions simultaneously, creating finished pages, databases, and reports directly in your workspace. Because the agent is built into the same platform where you store all documentation, it can understand your work and act on it.

When Notion AI wins decisively:

- Your team references internal documentation constantly (onboarding guides, technical specs, strategy memos)

- Projects require synthesizing information from dozens of historical documents

- You need AI that understands conceptual relationships, not just structured task data

- Your workflow emphasizes written artifacts over rapid task execution

Notion holds a reported 55.89% share of the collaborative workspace market, largely because its AI isn't a feature bolted onto a task manager. It's the connective tissue of a knowledge management system. The database-first architecture means every piece of information is queryable, relatable, and synthesizable.

The operational tradeoff: Implementation friction. Notion hands you Lego blocks and says "build your system." Teams with dedicated operations managers who can architect database structures will unlock exponential value. Teams expecting plug-and-play solutions will struggle. New free plan users get approximately 20 AI responses per workspace but cannot purchase full AI access separately. Full AI features now require upgrading to the Business plan at $15/user/month, making it a significant investment for smaller teams.

Winner for High-Velocity, Task-Based Operations

ClickUp Brain is built for organizations where execution speed determines survival. Brain saves users time by automating busywork and simplifying execution, and ClickUp claims an estimated 86% cost savings compared with buying comparable products at list price.

When ClickUp Brain wins decisively:

- Your team manages 50+ active projects simultaneously with cross-functional dependencies

- Daily standups, sprint retrospectives, and status reports consume excessive PM time

- You need AI that connects tasks, comments, docs, and external tools in real-time queries

- Automation of repetitive status updates is mission-critical

ClickUp Brain comprises three distinct yet complementary products: AI Knowledge Manager for contextual answers from docs, tasks, and projects; AI Project Manager for managing and automating work including progress reports and standups; and AI Writer for Work with role-based prompts suited for specific job needs. This architectural separation means each capability is purpose-built rather than generic.

The "Neural Network for Work" positioning isn't marketing hyperbole. As soon as you enable ClickUp Brain it starts indexing tasks, docs, comments, and connected third-party apps, with the Knowledge Manager returning cited answers pulled from exact docs or tasks. Ask "What's blocking Project Phoenix?" and you get task-level precision with comment context and doc references—all in under 5 seconds.

The operational tradeoff: Feature overwhelm. ClickUp is a maximalist platform. Every possible PM capability exists somewhere in the interface. For large organizations with diverse team needs, this flexibility is powerful. For small teams wanting simplicity, it's paralyzing. ClickUp Brain is not included in standard subscription tiers and is available as a paid add-on for approximately $9 per member per month on top of any paid plan.

Winner for Structured, CRM-Style Workflows

Monday AI excels when your work resembles structured processes more than creative knowledge work. Throughout 2025, Monday.com has aggressively integrated AI across the platform, positioning itself as an AI-first work operating system with capabilities that significantly reduce manual work and enhance decision-making.

When Monday AI wins decisively:

- Your team operates in repeatable, template-driven workflows (sales pipelines, event management, client onboarding)

- Visual board interfaces align with how your team naturally thinks about work

- You need AI that turns messy requests into structured processes instantly

- Compliance, audit trails, and data governance are critical requirements

monday magic uses natural language processing to interpret workflow requirements and automatically generates complete, functional workspaces with boards, columns, dashboards, forms, and AI-powered features all structured based on industry best practices. Describe "I need to manage a product launch with marketing, engineering, and sales coordination," and within 2 minutes you have a multi-board workspace with pre-built automations.

The "Task Architect" positioning reflects Monday's core strength: taking chaos and imposing structure. Monday.com's AI can categorize data at scale, automatically extract details from PDFs and documents, identify emotional cues from text for sentiment analysis, and summarize complex topics to extract key points. This makes it exceptional for operations teams managing standardized processes.

The operational tradeoff: Shallow documentation capabilities. Monday is designed for structured data, not long-form knowledge. The platform doesn't include native chat or video conferencing. It does provide robust asynchronous collaboration features integrated directly into work items, so discussions stay contextual rather than scattered. If your team's intelligence lives in documents rather than boards, Monday AI will feel constraining.

Pricing advantage: AI capabilities are automatically enabled in all Monday.com accounts with trial credits provided and additional credits purchased as needed, making it accessible for testing without upfront commitment.

The Practical Reality Nobody Discusses

Here's what no marketing site will tell you: all three platforms require operational discipline to deliver value. The AI doesn't magically organize your chaos. It amplifies your existing systems.

If your organization has no documentation culture, Notion AI will sit unused because there's nothing to search. If your team doesn't update task status reliably, ClickUp Brain's automated standups will report garbage data. If you haven't standardized workflows, Monday AI's automation suggestions will feel generic and unhelpful.

The uncomfortable truth is that AI in project management reveals organizational debt. The platforms that feel "hard to implement" often require you to define processes you've been running informally for years. The platforms that feel "easy" often automate bad habits.

The decision isn't just about features. It's about what operational transformation you're willing to commit to. Notion forces you to think in systems and relationships. ClickUp forces you to define explicit workflows and task dependencies. Monday forces you to structure ambiguity into repeatable processes.

Choose the AI that aligns with the operational discipline your organization is ready to adopt—not the one with the longest feature list.

Conclusion

AI isn't replacing project managers. It's replacing the busywork that keeps project managers from doing actual project management. AI shifts work management from reacting to problems to predicting them, spotting risks and bottlenecks before they impact delivery, while smarter workforce optimization helps teams balance capacity, prevent burnout, and match the right skills to the right work.

The three platforms we've examined represent divergent philosophies about what "AI-powered project management" means. Notion AI is the organizational brain that synthesizes institutional knowledge. ClickUp Brain is the neural network that connects disparate work contexts. Monday AI is the architect that imposes structure on chaos.

Your choice depends on a single question: Is your organization's bottleneck finding information, executing tasks, or structuring processes?

Answer that honestly, and the right platform becomes obvious. Answer it incorrectly, and you'll spend the next two years fighting your tools instead of using them.

The Q&A Test

Before committing to any platform, run this operational stress test. Open your current project management tool and ask this question:

"What was the outcome of the Project [X] decision we made three months ago, who was responsible for implementation, and what blockers did they encounter?"

If your AI can answer this accurately in under 10 seconds with cited sources, you have a functioning organizational memory. If it can't, you don't have a project management problem—you have a context crisis.

The right AI won't solve this overnight. But it will transform how your team captures, retrieves, and acts on institutional knowledge. That transformation is worth infinitely more than another email draft generator.

Choose the nervous system that fits your organizational biology. Then commit to the operational discipline required to make it work.