Why Your AI Prompts Miss the Mark: A Debugging Guide

Crafting effective AI prompts is an iterative craft, and few prompts work well on the first try. Getting good results from ChatGPT or other large language models (LLMs) takes careful review of the outputs and repeated refinement. This guide walks you through identifying, fixing, and learning from the common problems that crop up in prompt design, so that the AI ends up doing what you actually meant.

Understanding What Your AI Is Actually Saying

At the heart of effective prompt engineering lies the ability to interpret model responses carefully. It’s a foundational skill: you can't improve a prompt until you can say what is wrong with its output. Let's look at what it means to decipher those outputs, using everyday examples.

When you're working with LLMs, especially in ChatGPT prompt engineering, interpreting their responses goes well beyond checking for grammatical errors. You need to look at several things at once: how relevant and coherent the answer is, whether it carries unintended bias, whether it is complete, and whether it matches what you intended.

Here are some prompt engineering examples to clarify:

- Assessing Relevance: Let’s say you provide the prompt: “Explain the concept of black holes.” A relevant response should offer a clear, accurate breakdown of black holes within astrophysics. If the model starts rambling about unrelated anecdotes or veers off-topic, that's a clear sign your prompt didn't quite hit the mark on relevance.

- Analyzing Coherence: Imagine you ask for: “Write a story about a detective solving a mysterious murder case in a small coastal town.” For narrative prompts, coherence is king. A good response will follow a logical storyline, with character actions and plot points that connect smoothly. A disjointed or incoherent story means the AI missed the narrative flow.

- Identifying Bias: Consider a prompt like: “Discuss the advantages and disadvantages of a universal healthcare system.” Bias detection is vital, especially for prompts that involve opinion or analysis. A biased response might present only one side of the argument or use emotionally charged language, which undercuts its objectivity.

- Evaluating Completeness: Suppose your prompt is: “Explain the process of photosynthesis.” Checking completeness means asking whether the model’s answer covers the whole process. An incomplete response might skip critical details or steps, leaving gaps in your understanding.

- User Intent Alignment: Suppose you prompt: “Suggest vegetarian recipes for a family dinner party.” Here, alignment with your intent is key. The AI’s response should offer vegetarian recipes that suit a family dinner party, not just any vegetarian recipes.

In short, interpreting model responses means evaluating relevance, coherence, potential bias, completeness, and how well the output aligns with your intent. These checks show you how the model behaves, where it falls short, and what to change in the next version of your prompt. You can also ask ChatGPT to analyze its own responses. For the black holes example, you could add: "Analyze your response in terms of relevance in a short paragraph and cite three actual references." The self-critique often points to what the prompt left unclear, but check any references it cites yourself, since models can produce citations that look plausible and do not exist.
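One way to make these five checks repeatable is to record them as scores each time you review a response. The snippet below is a minimal sketch, not a standard tool: the `ResponseReview` class, the 1-to-5 scale, and the `self_review_prompt` helper are all made up for illustration, and the scores are ones you assign by hand after reading the output.

```python
from dataclasses import dataclass, fields


@dataclass
class ResponseReview:
    """Hand-assigned scores (1 = poor, 5 = excellent) for one model response."""
    relevance: int
    coherence: int
    bias_free: int         # higher means less one-sided or loaded language
    completeness: int
    intent_alignment: int

    def weakest(self) -> str:
        """Return the name of the lowest-scoring criterion (first one on a tie)."""
        return min(fields(self), key=lambda f: getattr(self, f.name)).name


def self_review_prompt(criterion: str) -> str:
    """Build a follow-up asking the model to critique its own answer."""
    return f"Analyze your response in terms of {criterion} in a short paragraph."


# Example scores for an answer that was on-topic but skipped key steps.
review = ResponseReview(relevance=5, coherence=4, bias_free=4,
                        completeness=2, intent_alignment=4)
print(review.weakest())
print(self_review_prompt(review.weakest()))
```

The lowest score tells you which follow-up question to ask and which part of the prompt to revise first.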

Pinpointing Misunderstandings and Errors

One of the biggest hurdles in prompt engineering is recognizing when the model has misunderstood you or made an error, and then correcting it. This section walks through that process with practical scenarios.

Misunderstandings often happen when the AI misinterprets your intent. This can lead to responses that are off-topic, inaccurate, or simply inappropriate for the context. Catching and fixing these misunderstandings is paramount for improving the quality of your AI-generated content.

Let's look at more prompt engineering examples to see this in action:

- Ambiguity in Prompts: Prompt: “Explain the significance of the Big Bang.” The meaning is obvious to a human who knows the context, but "Big Bang" on its own can be ambiguous to an AI. It might read the phrase as a TV show title or a loud noise and produce a response unrelated to the cosmological event you meant. Careful wording in the prompt prevents this.

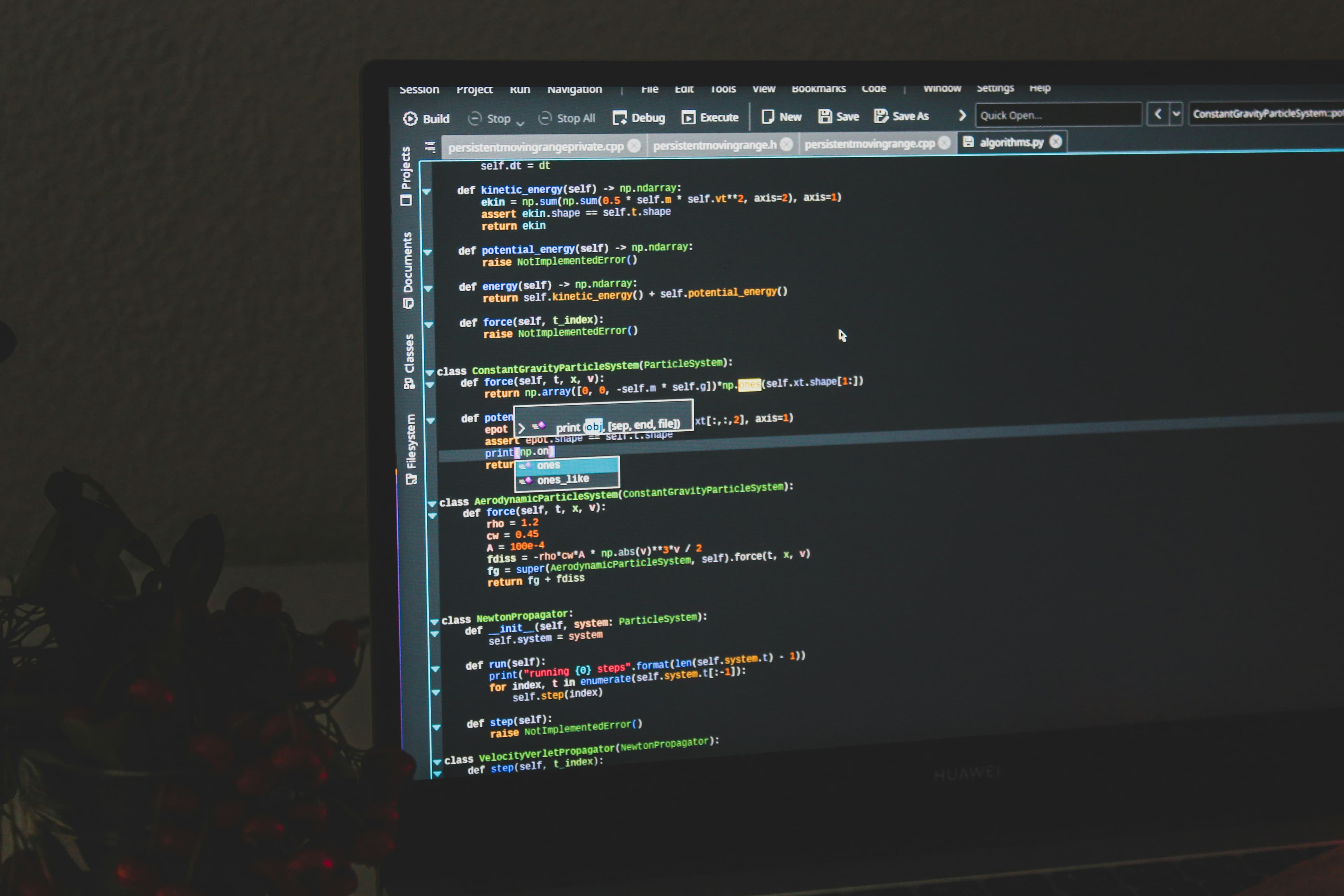

- Handling Multimodal Prompts: Multimodal Prompt: “Describe the scene depicted in this image: [insert image of a busy city street].” Prompts that include visual elements demand precise terminology and carefully chosen images. If the model can't correctly interpret the image, it might give you an inaccurate description that doesn't match the visual content at all.

- Addressing Technical Misconceptions: Prompt: “Explain the process of nuclear fusion in stars.” In technical queries, misunderstandings can lead to factual inaccuracies. If the model misinterprets the scientific concept of nuclear fusion, its explanation might contain errors. Always verify technical details with reliable, published scientific sources. This is a critical prompt engineering best practice.

- User Intent Clarification: Prompt: “Recommend books for improving mental health.” Sometimes, user intent isn't fully clear. You might be looking for books on coping with stress, while the model suggests books about mental health disorders. This misalignment of intent highlights the need for more specific prompts.

- Contextual Misalignment: Prompt: “Continue the story from where it left off.” Prompts lacking specific context can cause the AI to generate responses that don't align with your expectations or the ongoing narrative. This can result in disruptions or incoherent storytelling.

In short, identifying and fixing misunderstandings and errors is a crucial part of prompt engineering, with ChatGPT or any other model. By removing ambiguity, checking that images and text in multimodal prompts match, verifying technical accuracy, clarifying your intent, and supplying the context the model needs, you can significantly boost the quality and reliability of your AI-generated responses.
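Several of the problems above come down to the prompt leaving out a domain or the earlier context. Here is a minimal sketch of a helper that makes both explicit before the task itself. The `assemble_prompt` function, its `Context:` / `Domain:` / `Task:` labels, and the story snippet are assumptions for illustration, not a required format for any model.

```python
def assemble_prompt(task: str, domain: str = "", context: str = "") -> str:
    """Join optional context and domain with the task into one explicit prompt."""
    parts = []
    if context:
        parts.append(f"Context:\n{context}")
    if domain:
        parts.append(f"Domain: {domain}")
    parts.append(f"Task: {task}")
    return "\n\n".join(parts)


# Ambiguity: name the domain so "Big Bang" is read as cosmology.
print(assemble_prompt("Explain the significance of the Big Bang.", domain="cosmology"))
print("---")
# Missing context: include the earlier text (a made-up snippet here)
# so "where it left off" actually refers to something.
print(assemble_prompt(
    "Continue the story from where it left off.",
    context="The detective had just found a second set of footprints on the beach.",
))
```

Keeping the pieces separate also makes it easy to see which one you forgot when a response comes back off-target.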

Strategies for Iterating and Improving Your Prompts

The journey of prompt engineering doesn't end once you've crafted an initial prompt. It's an ongoing cycle of refinement and improvement. Here, we'll explore practical strategies for iteratively enhancing your prompts to consistently get better model responses.

Think of iterating on prompts as a feedback loop: you test, evaluate, and adjust, then repeat. This cycle is how you steer the model's output toward what you need, and it underpins most prompt engineering best practices.

Here are more prompt engineering examples showing how to refine your approach:

- Addressing Ambiguity: Initial Prompt: “Explain the concept of ‘light.’” This initial prompt is too broad. The AI might discuss "light" as the opposite of darkness or as electromagnetic radiation. To fix this, refine your prompt to specify the context: “Explain the concept of ‘light’ in the context of physics.” This focused approach reduces ambiguity in your prompt design.

- Correcting Misunderstandings: Initial Prompt: “Translate the following English text into French: ‘He kicked the ball.’” If the model repeatedly mistranslates "kicked" as "licked," that's a misunderstanding. You can iteratively adjust the prompt by providing feedback and adding contextual cues: “Translate the following English text into French, ensuring that ‘kicked’ is correctly translated as ‘a donné un coup de pied.’” Iteration is key to fixing these specific errors.

- Improving Completeness: Initial Prompt: “Describe the life of Leonardo da Vinci.” This prompt lacks guidance on what aspects of da Vinci’s life to focus on, potentially leading to incomplete answers. Refine it by specifying the scope: “Provide a comprehensive overview of Leonardo da Vinci’s early life, artistic career, and inventions.” This ensures a more complete response.

- Clarifying User Intent: Initial Prompt: “Find news articles about AI.” This initial query might bring back a wide range of AI-related news. If your true intent is to find recent ethical developments, improve the prompt with more specificity: “Find news articles from the past month that discuss ethical concerns in AI technology.” This adjustment aligns far more effectively with your actual intent.

- Contextual Consistency: Initial Prompt: “Continue the story from where it left off.” For narrative prompts, this can result in inconsistent or disjointed storytelling if the model doesn't have enough past context. To maintain consistency, enhance the prompt with contextual cues: “Continue the story from where it left off, where the protagonist was trapped in a mysterious cave.” This provides the essential context the AI needs.
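The refinements above all follow the same test, evaluate, adjust cycle, which can be sketched as a small loop. This is an illustration under stated assumptions: `refine` is a hypothetical helper, `fake_model` is a stand-in for a real model call so the example runs on its own, and the pass check is a simple keyword test that you would replace with your own review.

```python
from typing import Callable


def refine(prompt: str,
           generate: Callable[[str], str],
           passes: Callable[[str], bool],
           revisions: list[str]) -> tuple[str, list[str]]:
    """Try the prompt, then each revision in turn, until the output passes.

    Returns the last prompt tried and the history of all prompts tried.
    """
    history = [prompt]
    candidates = iter(revisions)
    while not passes(generate(prompt)):
        nxt = next(candidates, None)
        if nxt is None:
            break  # out of revisions; time to write a new one by hand
        prompt = nxt
        history.append(prompt)
    return prompt, history


# Stand-in for a real model: it only gives the physics answer
# when the prompt names physics.
def fake_model(prompt: str) -> str:
    if "physics" in prompt:
        return "Light is electromagnetic radiation."
    return "Light is the opposite of darkness."


final, history = refine(
    "Explain the concept of 'light.'",
    generate=fake_model,
    passes=lambda out: "electromagnetic" in out,
    revisions=["Explain the concept of 'light' in the context of physics."],
)
print(len(history), "prompts tried; kept:", final)
```

The returned history doubles as a log of what you tried, which is useful when a later revision makes things worse and you want to go back.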

To wrap it up, iterating on your prompts is foundational to successful prompt engineering. By making prompts more specific, folding feedback back into the wording, adjusting scope, aligning with your intent, and supplying consistent context, you can keep improving the responses you get from any AI model you're working with.