AI's New Reality: Fake Faces, Blurred Truths

Remember that viral website where you could refresh the page and see a new, incredibly lifelike photo of a person who didn't actually exist? It felt a little unsettling, almost like looking at a ghost. These images, produced by a class of generative AI models called Generative Adversarial Networks (GANs), offer a stark glimpse into how generative AI is reshaping our world. This isn't just about cool tech; it's about how artificial intelligence is changing everything from our concept of reality to the very nature of human memory and identity.

Modularity: The Unseen Architect of Our Digital World

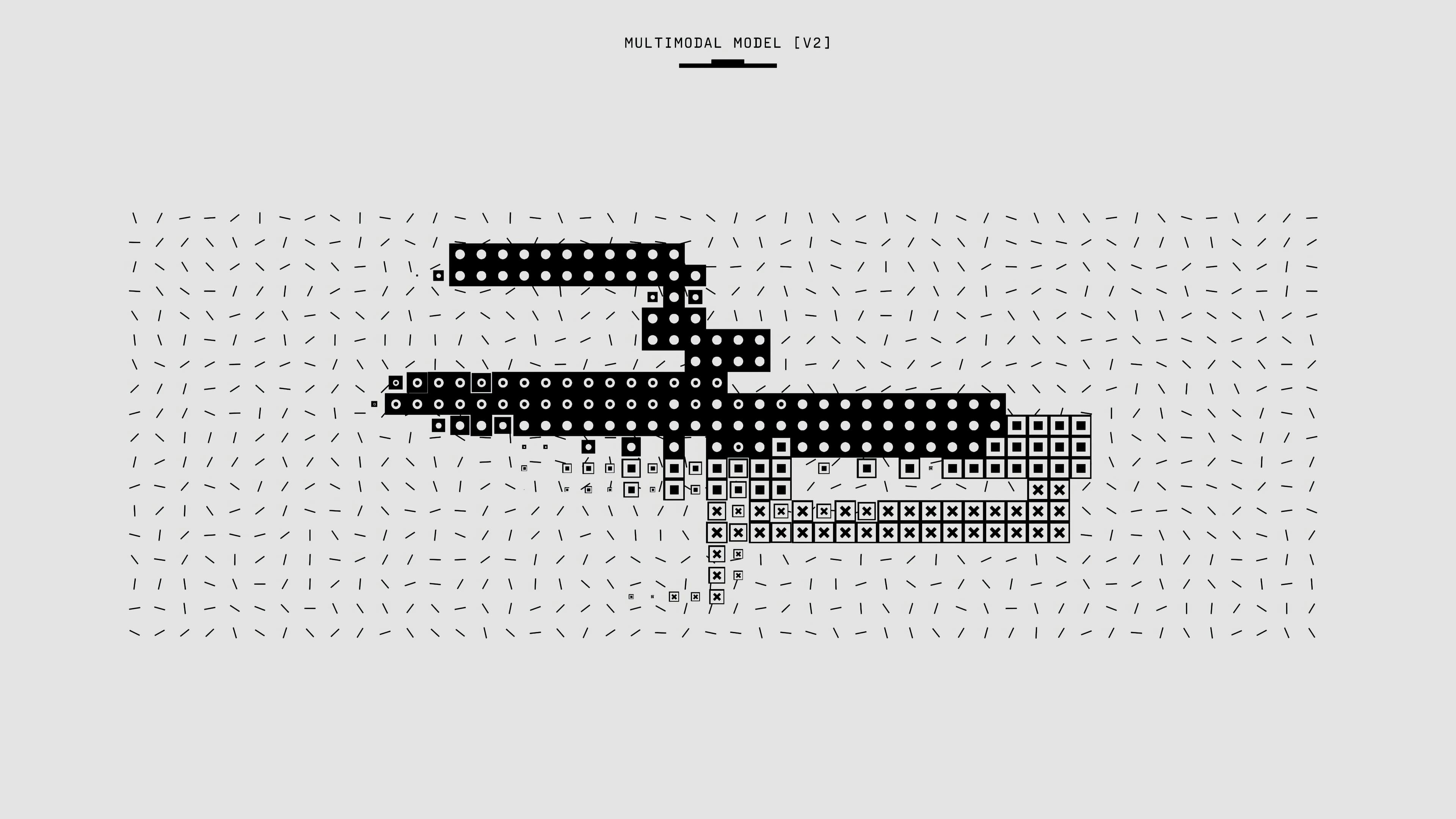

At its heart, the magic behind these non-existent faces is a principle called modularity. Think of it like building with LEGOs: complex structures are made from interchangeable, reconfigurable parts. In the digital realm, modularity is foundational. It’s how systems like Google, Facebook, and Twitter organize their algorithms. These platforms use machine learning to analyze countless discrete units of data – your clicks, interactions, and messages – to select the content you see.
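As a rough illustration of how discrete units of data can drive what you see, here is a minimal sketch. It is not how any real platform works: the function `select_feed`, the topic labels, and the scoring rule (count past clicks per topic, show the most-clicked topics first) are all assumptions made up for this example.

```python
from collections import Counter


def select_feed(click_history, candidates, k=3):
    """Rank candidate posts by how often the user clicked their topic before.

    Hypothetical scoring rule for illustration only.
    """
    topic_clicks = Counter(click_history)  # missing topics count as 0
    # sorted() is stable, so posts with equal scores keep their original order.
    ranked = sorted(
        candidates,
        key=lambda post: topic_clicks[post["topic"]],
        reverse=True,
    )
    return ranked[:k]


# Each click and each post is a small, interchangeable module of data.
history = ["sports", "sports", "politics", "sports", "cooking"]
posts = [
    {"id": 1, "topic": "cooking"},
    {"id": 2, "topic": "sports"},
    {"id": 3, "topic": "science"},
    {"id": 4, "topic": "politics"},
    {"id": 5, "topic": "sports"},
]

feed = select_feed(history, posts, k=3)
print([post["id"] for post in feed])  # [2, 5, 1]
```

Notice that the science post never makes the cut: a rule this simple only ever shows more of what was clicked before.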

Historically, this idea isn't new. From the movable type of the Gutenberg press to the interchangeable parts of early American manufacturing, modularity has driven efficiency and complexity. In the computing world, this approach blossomed. Early machines like ENIAC, developed during WWII, broke down complex calculations into repeatable, automated steps. Today, this principle underpins the "soft labor" economy, where intellectual tasks, once distinct from physical work, are increasingly automated and streamlined.

When AI Creates — And Deceives

The "This Person Does Not Exist" project is a perfect example of modularity in action. A GAN pits two networks against each other: a generator that produces candidate images, and a discriminator that tries to tell them apart from real photos. Trained on a large collection of real photos, the generator learns the patterns that make a face look convincing and then creates entirely new, unique portraits. The photos it produces are not records of actual people; they're digital composites derived from an analysis of existing photographic data. This creates a "meta" level of representation, where the images aren't directly indexical (pointing to a real-world thing) but rather abstract resemblances.
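To make the adversarial idea concrete, here is a minimal sketch. It is not the code behind "This Person Does Not Exist". It assumes a toy setting in which the "real" data are just numbers drawn from a bell curve centered at 4, the generator is a single linear function, and the discriminator is a one-input logistic classifier. Every name here (`train_toy_gan`, `real_mean`, and so on) is invented for illustration.

```python
import math
import random


def sigmoid(t):
    """Numerically stable logistic function."""
    if t >= 0:
        return 1.0 / (1.0 + math.exp(-t))
    e = math.exp(t)
    return e / (1.0 + e)


def train_toy_gan(steps=2000, lr=0.05, real_mean=4.0, seed=0):
    """Train a one-dimensional toy GAN and return the generator's parameters."""
    rng = random.Random(seed)
    a, b = 1.0, 0.0  # generator: G(z) = a*z + b turns noise into a "fake" sample
    w, c = 0.0, 0.0  # discriminator: D(x) = sigmoid(w*x + c), its guess that x is real

    for _ in range(steps):
        x_real = rng.gauss(real_mean, 1.0)  # one real sample
        z = rng.gauss(0.0, 1.0)             # random noise fed to the generator
        x_fake = a * z + b                  # one generated sample

        # Discriminator step: gradient ascent on log D(real) + log(1 - D(fake)).
        d_real = sigmoid(w * x_real + c)
        d_fake = sigmoid(w * x_fake + c)
        w += lr * ((1.0 - d_real) * x_real - d_fake * x_fake)
        c += lr * ((1.0 - d_real) - d_fake)

        # Generator step: gradient ascent on log D(fake), i.e. try to fool
        # the freshly updated discriminator.
        d_fake = sigmoid(w * x_fake + c)
        grad_x = (1.0 - d_fake) * w  # derivative of log D(x) with respect to x
        a += lr * grad_x * z
        b += lr * grad_x

    return a, b


a, b = train_toy_gan()
print(f"generator after training: G(z) = {a:.2f} * z + {b:.2f}")
```

The generator never sees a real sample directly; it only receives the discriminator's feedback. Toy setups like this often oscillate around the target rather than settling on it, which hints at why real image GANs need far more careful engineering.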

This detachment from physical reality raises profound questions. When generative AI models can craft such convincing fictions, our traditional understanding of what's real begins to blur. This isn't just an artistic curiosity; it touches on the concept of AI hallucination, where algorithms generate content that seems plausible but has no basis in fact. Imagine a future where distinguishing between AI-generated content and genuine human experience becomes incredibly difficult.

Memory in the Age of Algorithms

This blurring extends to our understanding of memory itself. Science fiction has long explored this. Take Blade Runner, for instance, where memories are treated as data that can be implanted in androids (the film's "replicants"). The film asks: if memory is just a collection of modules, can it be reverse-engineered, reproduced, or even faked?

In reality, cognitive psychology has shown that human memory isn't a perfect recording device. Instead, it's a reconstructive process, piecing together fragments each time we recall an event. Much like an archaeologist reconstructing a dinosaur from scattered bones, our memories can be incomplete, modified, or even fabricated over time. This inherent unreliability contrasts sharply with the digital ideal of perfect recall: an uncorrupted computer file returns exactly what was saved.

The rise of AI, including ChatGPT and other advanced language models, further complicates this. If AI can generate convincing narratives and "remember" vast datasets, how does this redefine our own cognitive processes? Initially, our understanding of computer memory was modeled after human memory. Now, the influence flows both ways, with AI pushing us to rethink how we perceive and value our own internal archives.

Remixing Reality: AI's Cultural Footprint

Remix culture, where existing elements are recombined to create new works, thrives on modularity. From classic music sampling and collage art to modern deepfakes in film and AI voiceovers (like those used to emulate Anthony Bourdain or Andy Warhol), machine learning is amplifying this process. AI can take fragments of existing material and repurpose them into something novel, sometimes so transformed that the source is no longer recognizable. It streamlines what was once a creative, human-centric act of cultural citation or material sampling.

This leads to interesting ethical dilemmas. When generative AI tools can create highly convincing "fake" content, whether it's an image, a voice, or a video, the lines between original and copy, truth and fiction, become increasingly hard to discern. This has profound implications for trust and authenticity in our hyper-connected world.

The Human, The Machine, and The Posthuman Question

As AI becomes more sophisticated, moving towards self-training and even forms of "agential" decision-making, it pushes the boundaries of what it means to be human. This concept is explored in posthumanism, which examines the blurring lines between humans, machines, and nature. Films like 2001: A Space Odyssey, with its sentient AI HAL 9000, challenge us to consider whether a machine can possess its own will or "body." The idea of agentic AI suggests systems that can make decisions and learn autonomously, raising questions about consciousness and the nature of knowledge itself. The definition of agentic AI is still evolving, but it points to a future where AI's role extends beyond that of a mere tool.

This shift isn't without its darker side. The pervasive influence of AI, especially on social media platforms, has contributed to "echo chambers" and the spread of misinformation. Algorithms, often designed for engagement and profit, can inadvertently reinforce existing biases and insulate users within comfortable, unchallenged worldviews. We see this play out in real-world scenarios, from political polarization in the US to misinformation campaigns during international conflicts. GANs show the same pattern: their output often privileges certain demographics, as in the predominance of white faces in "This Person Does Not Exist." This highlights how algorithmic biases can reflect and amplify societal inequalities, with real consequences for areas like judicial systems. It also shows that even commercially deployed generative AI can have unintended societal impacts.
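One way to see how skewed training data turns into skewed output is the minimal sketch below. It is a deliberately crude stand-in for a real generative model: the "model" only learns how often each label appears and then samples new labels at the same rates. The labels `group_a` and `group_b`, the 80/20 split, and both function names are hypothetical.

```python
import random
from collections import Counter


def fit_frequencies(labels):
    """'Train' by recording how often each label appears in the data."""
    counts = Counter(labels)
    total = len(labels)
    return {label: n / total for label, n in counts.items()}


def generate(freqs, n, seed=0):
    """'Generate' n new labels by sampling at the learned frequencies."""
    rng = random.Random(seed)
    labels = list(freqs)
    weights = [freqs[label] for label in labels]
    return rng.choices(labels, weights=weights, k=n)


# Hypothetical training set in which one group is heavily over-represented.
training = ["group_a"] * 80 + ["group_b"] * 20

model = fit_frequencies(training)
samples = generate(model, 10_000)
share_a = samples.count("group_a") / len(samples)

print(model)
print(f"share of group_a in generated output: {share_a:.2f}")
```

Nothing in the code favors either group on purpose. The imbalance in the output comes entirely from the imbalance in what the model was shown.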

The Future of Memory and Identity

What if our memories could be stored in the cloud, perfectly preserved and accessible at will? This "metamemory," where AI could reconstruct and archive our experiences outside the human brain, is no longer purely speculative. Research into translating brain activity into images is still in its early stages, but it hints at a future of far more accurate memory recall, free from human fallibility. This concept, explored in sci-fi classics like Strange Days and more recently in Black Mirror, means memories could become digital files that can be copied and replayed endlessly.

However, this also presents a paradox: if copies are identical, what happens to the "original" memory? As we integrate powerful systems like ChatGPT into our daily lives, these questions become more pressing. Will we become more detached from our physical world, seeking comfort in simulated realities designed to fit our ideological tendencies, as the concept of the metaverse suggests?

Understanding how generative AI could help construct a world that further detaches us from diversity and truth is an existential challenge. Modularity, as a binding force for ongoing innovation, means constant change. It’s up to us to ensure that this change fosters a future that remains fair, diverse, and connected to our shared reality, rather than a homogeneous, algorithmically curated illusion.