Building Trust in AI Starts with Ethics and Security

Answering the question of "What is AI?" is getting more complex every day. We're seeing it pop up everywhere, from healthcare and finance to our creative tools. But as this technology grows more powerful, a crucial conversation is bubbling to the surface, one that balances the excitement of innovation with the weight of responsibility. It's not just about what artificial intelligence can do; it's about how we ensure it's developed ethically and securely. This means building systems that are aligned with human values while also being strong enough to protect user privacy and fend off threats.

At its core, this challenge splits into two main areas: ethics and security. Think of ethics as the moral compass guiding how AI systems are designed and used. It's about fairness, transparency, and accountability. We need to make sure AI doesn't amplify existing human biases or violate individual rights. On the other side, security is the shield. It's about protecting AI systems from anything that could compromise their data or reliability, like malicious attacks or even simple malfunctions. Finding the right balance between pushing the boundaries of technology and ensuring it’s used responsibly is the central challenge for anyone working with AI.

The Ethical Landscape of AI

When we talk about AI ethics, we're really talking about building trust. For people to accept and rely on AI systems, they need to believe those systems will operate fairly and honestly. This boils down to three key pillars: fairness, transparency, and accountability.

- Fairness: An AI is only as good as the data it’s trained on. If that data reflects societal biases around race, gender, or age, the AI will learn and even amplify those biases. True fairness means actively working to identify and clean out these discriminatory patterns from the datasets and algorithms that power models.

- Transparency: This is about being able to peek under the hood. If an AI system denies someone a loan or makes a critical diagnosis, we need to understand why. Transparency means making the logic behind an AI's decisions clear and understandable to the people it affects.

- Accountability: When an AI gets something wrong, who is responsible? Accountability means having clear systems in place to address errors or harm caused by AI. It ensures that developers and the companies deploying the technology are answerable for its impact on people and society.
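To make the fairness pillar a little more concrete, here is a minimal sketch of one common check: comparing how often each group receives a positive decision (often called demographic parity). The group labels, the sample decisions, and the function names are all made up for illustration, and a single metric like this is only a starting point, not a full fairness audit.

```python
from collections import defaultdict

def approval_rates(decisions):
    """Return the share of positive decisions for each group.

    `decisions` is a list of (group, approved) pairs, where `approved` is a bool.
    """
    totals = defaultdict(int)
    approved = defaultdict(int)
    for group, ok in decisions:
        totals[group] += 1
        if ok:
            approved[group] += 1
    return {group: approved[group] / totals[group] for group in totals}

def demographic_parity_gap(decisions):
    """Largest difference in approval rate between any two groups."""
    rates = approval_rates(decisions)
    return max(rates.values()) - min(rates.values())

# Hypothetical loan decisions: (group label, approved?)
sample = [
    ("A", True), ("A", True), ("A", True), ("A", False),
    ("B", True), ("B", False), ("B", False), ("B", False),
]

rates = approval_rates(sample)
gap = demographic_parity_gap(sample)
print(rates)
print(f"parity gap: {gap:.2f}")
```

A large gap doesn't prove discrimination on its own, but it is the kind of signal that should trigger a closer look at the training data and the model.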

Beyond these principles, ethical design also demands a hard look at the broader societal impact. We have to consider how automation affects jobs, how AI-powered surveillance impacts privacy, and even how these systems might influence our social and political conversations.

AI's Security Blind Spots

Integrating AI into our critical systems creates incredible efficiencies, but it also opens the door to new and significant security risks. As AI systems become more common, protecting them becomes a top priority. The challenges generally fall into a few key areas.

First, there's data privacy. AI systems are hungry for data, and that often includes sensitive personal information. A breach in a healthcare or financial AI doesn't just leak data; it can shatter patient trust and lead to massive legal and financial penalties. We saw this happen when a healthcare provider’s AI data repository was breached due to weak security, exposing countless sensitive patient records.

Second, AI models are vulnerable to specific kinds of attacks. One of the most talked-about is the "adversarial attack," where an attacker makes tiny, almost invisible changes to input data to trick the model into making a mistake. Imagine a financial firm’s AI trading system being manipulated this way, causing it to make erratic trades and lose millions. It highlights just how fragile these complex systems can be.
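A toy example shows how little it can take. The sketch below uses a hypothetical three-feature linear classifier (the weights, bias, and input are invented) and nudges every feature by at most `epsilon` in the direction that hurts the current prediction, in the spirit of the fast gradient sign method. Real attacks target far larger models, but the idea is the same.

```python
def score(weights, bias, x):
    """Raw score of a linear model: w . x + b."""
    return sum(w * xi for w, xi in zip(weights, x)) + bias

def predict(weights, bias, x):
    """Class 1 if the score is positive, otherwise class 0."""
    return 1 if score(weights, bias, x) > 0 else 0

def sign(value):
    """Return -1, 0, or 1 depending on the sign of `value`."""
    return (value > 0) - (value < 0)

def adversarial_example(weights, bias, x, epsilon):
    """Shift each feature by at most `epsilon` to push the score
    toward the opposite class. For a linear model the gradient of
    the score with respect to the input is just the weight vector."""
    direction = -1 if predict(weights, bias, x) == 1 else 1
    return [xi + direction * epsilon * sign(w) for w, xi in zip(weights, x)]

# Hypothetical model and input, chosen only for illustration
weights = [2.0, -3.0, 1.0]
bias = -0.5
x = [0.6, 0.2, 0.3]

x_adv = adversarial_example(weights, bias, x, epsilon=0.1)
print("original prediction:   ", predict(weights, bias, x))
print("adversarial prediction:", predict(weights, bias, x_adv))
```

No feature moves by more than 0.1, yet the predicted class changes. Defenses such as adversarial training and input validation aim to make this kind of flip much harder.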

Finally, there’s the question of system integrity. We need to be sure that an AI system is doing exactly what it's supposed to do, without hidden flaws or unintended consequences. The stakes are highest in applications like autonomous vehicles. In one case, a security flaw in a self-driving car’s decision-making algorithm was exploited, causing the vehicle to behave erratically and put passengers in danger. These aren't just technical glitches; they're serious safety risks that can be caused by anything from a design flaw to an attack.

Creating Guardrails with Rules and Best Practices

As the use of AI grows, a global conversation about regulation is taking shape. Governments and international bodies are working to create frameworks that encourage innovation while establishing clear guardrails. In Europe, regulations like the GDPR and the AI Act are setting comprehensive rules for data privacy and high-risk AI applications. In the U.S., the approach is often more specific to industries like healthcare and finance, with organizations like the National Institute of Standards and Technology (NIST) developing standards to ensure AI is trustworthy.

Beyond formal regulations, building ethical and secure AI requires a commitment to best practices from the ground up. This means involving diverse stakeholders, such as ethicists and community representatives, in the development process. It also involves being transparent about how algorithms work and building in privacy from the very start, not as an afterthought. A huge part of this is actively looking for and rooting out bias in datasets to ensure AI models don't perpetuate unfair outcomes.

To bolster security, organizations need a multi-layered defense. This starts with basics like data encryption and strict access controls. But it also involves more advanced strategies like designing secure AI algorithms that are resilient to attacks, running regular security audits, and having a clear incident response plan for when something goes wrong.
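As a rough illustration of two of those layers, strict access controls and an audit trail, here is a small sketch. The roles, permissions, and class name are hypothetical; a production system would lean on an established identity and access management service rather than a hand-rolled check like this one.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical role-to-permission map; anything not listed is denied
ROLE_PERMISSIONS = {
    "data_scientist": {"read_features"},
    "ml_engineer": {"read_features", "deploy_model"},
    "auditor": {"read_audit_log"},
}

@dataclass
class AccessController:
    """Deny-by-default permission check that records every attempt."""
    audit_log: list = field(default_factory=list)

    def is_allowed(self, role, action):
        # Unknown roles get an empty permission set, so they are denied
        allowed = action in ROLE_PERMISSIONS.get(role, set())
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "role": role,
            "action": action,
            "allowed": allowed,
        })
        return allowed

controller = AccessController()
print(controller.is_allowed("ml_engineer", "deploy_model"))
print(controller.is_allowed("data_scientist", "deploy_model"))
print(controller.is_allowed("intern", "read_features"))
print(len(controller.audit_log), "attempts recorded")
```

Logging denied attempts alongside allowed ones matters: the denied entries are often the first thing a security audit or incident response will want to see.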

The Future is Both Ethical and Secure

Looking ahead, the concepts of ethics and security will become even more integrated into the AI development lifecycle. We'll likely see more government oversight and a stronger push for "ethics by design," where these considerations are part of the process from day one.

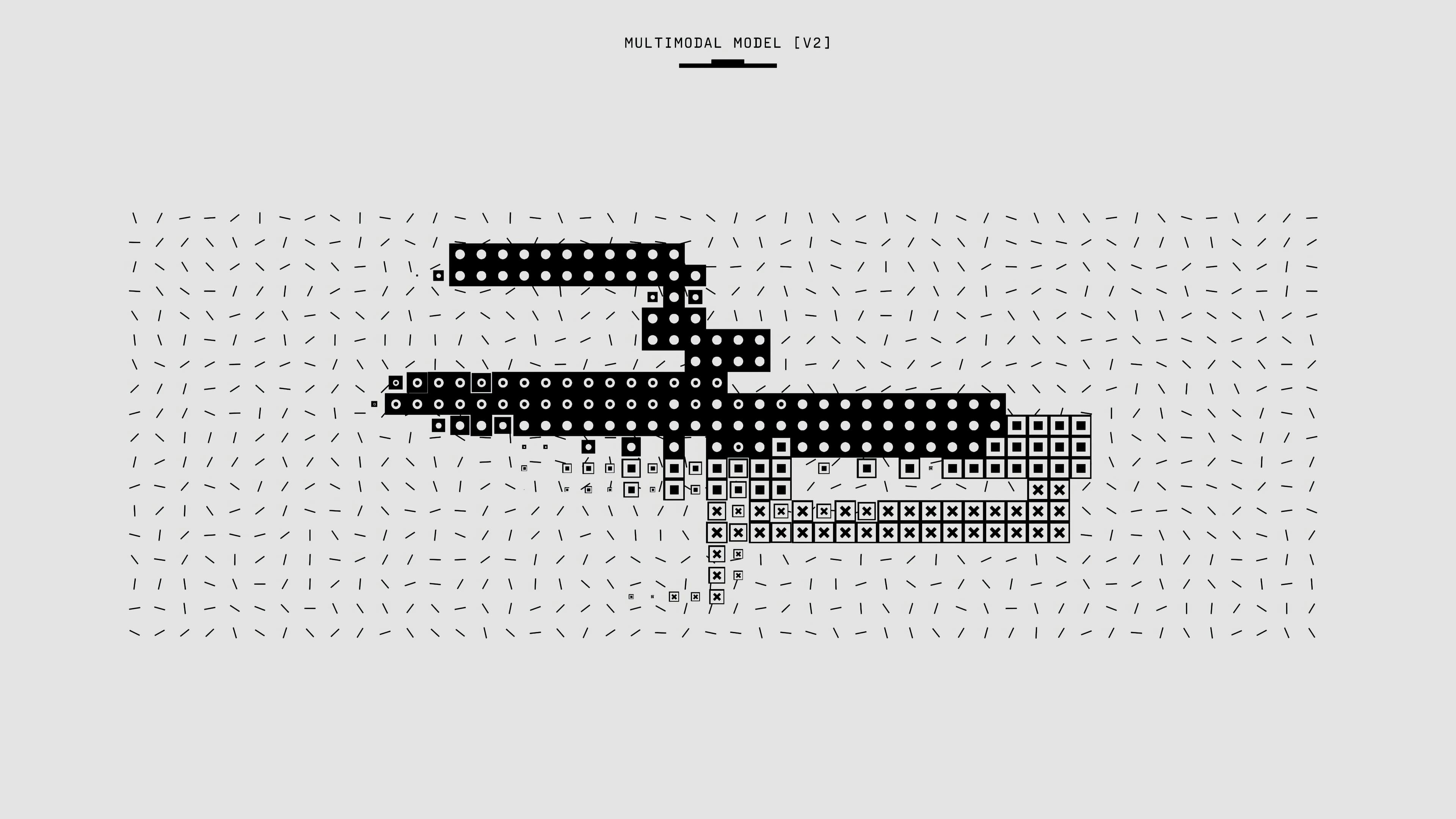

New technologies are also emerging to help tackle these challenges. For example, techniques like federated learning allow models to learn from data without that data ever leaving its source, which is a huge win for privacy. Explainable AI (XAI) is helping to demystify the "black box" of AI decisions, and blockchain shows promise for creating secure and transparent records of AI operations. As we move towards more advanced systems, these ethical and security foundations will be more important than ever.
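To see why federated learning helps with privacy, consider this stripped-down sketch of federated averaging. Each hypothetical client fits a one-parameter model `y = weight * x` on its own data and sends back only the updated weight; the server combines those weights, weighted by how many samples each client holds. The data, learning rate, and function names are assumptions for illustration, and real deployments add secure aggregation and other safeguards on top.

```python
def local_update(weight, data, lr=0.1, steps=20):
    """Run a few gradient-descent steps on one client's private data.

    Fits y = weight * x by minimizing mean squared error. Only the
    updated weight is returned; the raw data never leaves the client.
    """
    for _ in range(steps):
        grad = sum(2 * (weight * x - y) * x for x, y in data) / len(data)
        weight -= lr * grad
    return weight

def federated_round(global_weight, clients):
    """Average the clients' updated weights, weighted by sample count."""
    updates = [(local_update(global_weight, data), len(data)) for data in clients]
    total = sum(count for _, count in updates)
    return sum(w * count for w, count in updates) / total

# Hypothetical private datasets, each roughly following y = 2 * x
clients = [
    [(1.0, 2.0), (2.0, 4.1)],
    [(1.5, 2.9), (0.5, 1.0), (1.0, 2.1)],
]

weight = 0.0
for _ in range(5):
    weight = federated_round(weight, clients)

print(f"shared model weight: {weight:.2f}")
```

The server ends up with a model close to the underlying trend even though it only ever sees two numbers per round: each client's weight and its sample count.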

The path forward requires a collective effort. By focusing on ethics and security, we aren't slowing down progress; we're making it sustainable. We're building a future where we can trust AI to be a force for good—one that's innovative, inclusive, and safe for everyone.