The Hard Questions We're Not Asking About AI

When most of us try to answer the question "What is AI?", we picture the powerful tools we interact with daily. The conversation quickly turns to generative AI models that can write, create images, and analyze data at incredible speeds. But to truly understand what generative AI is, we have to look beyond the impressive capabilities and confront the serious ethical questions that come with it. As this technology becomes more woven into our lives, it’s creating complex challenges that we can’t afford to ignore.

The New Battlefield

One of the most unsettling conversations revolves around autonomous weapons systems, or “killer robots.” These are machines that could identify and engage targets without a human giving the final order. This possibility creates a massive ethical problem. The idea of a machine making a life-or-death choice on a battlefield, with no direct human oversight, challenges our fundamental ideas of accountability: if no person made the decision, it is unclear who answers for it. There’s a real fear that these systems could escalate conflicts, produce unintended outcomes, and ultimately lead to a loss of human control over warfare itself.

The implications don't stop there. The development of advanced AI weaponry is expensive, creating a divide between nations that can afford it and those that can't. This could easily spark a new kind of arms race, destabilizing global security as countries compete to build more sophisticated systems.

Constant Surveillance and Subtle Control

Beyond the battlefield, AI-powered surveillance is becoming a part of our daily lives, and it brings up serious privacy issues. These systems are capable of tracking our movements, monitoring our communications, and even predicting our behavior. While often framed as tools for security and crime prevention, they pose a significant threat to personal freedoms. What does it mean to be free when our every move is being recorded and analyzed?

This technology is open to misuse. For example, facial recognition could be used to profile individuals based on their beliefs or background. This creates a chilling effect on free expression, as people may become hesitant to voice their opinions if they feel they are being watched. Our digital lives are just as exposed. The same machine learning algorithms that personalize our social media feeds can also be used to shape public opinion, create echo chambers, and spread disinformation. This is one of the more troubling examples of AI in action, as it can erode trust in our institutions.

The Bias Inside the Machine

A critical problem we're facing with many types of AI is algorithmic bias. These systems are trained on massive amounts of data, and if that data reflects existing societal biases, the AI can learn and even amplify them. This can lead to unfair outcomes in everything from hiring and loan applications to the criminal justice system.

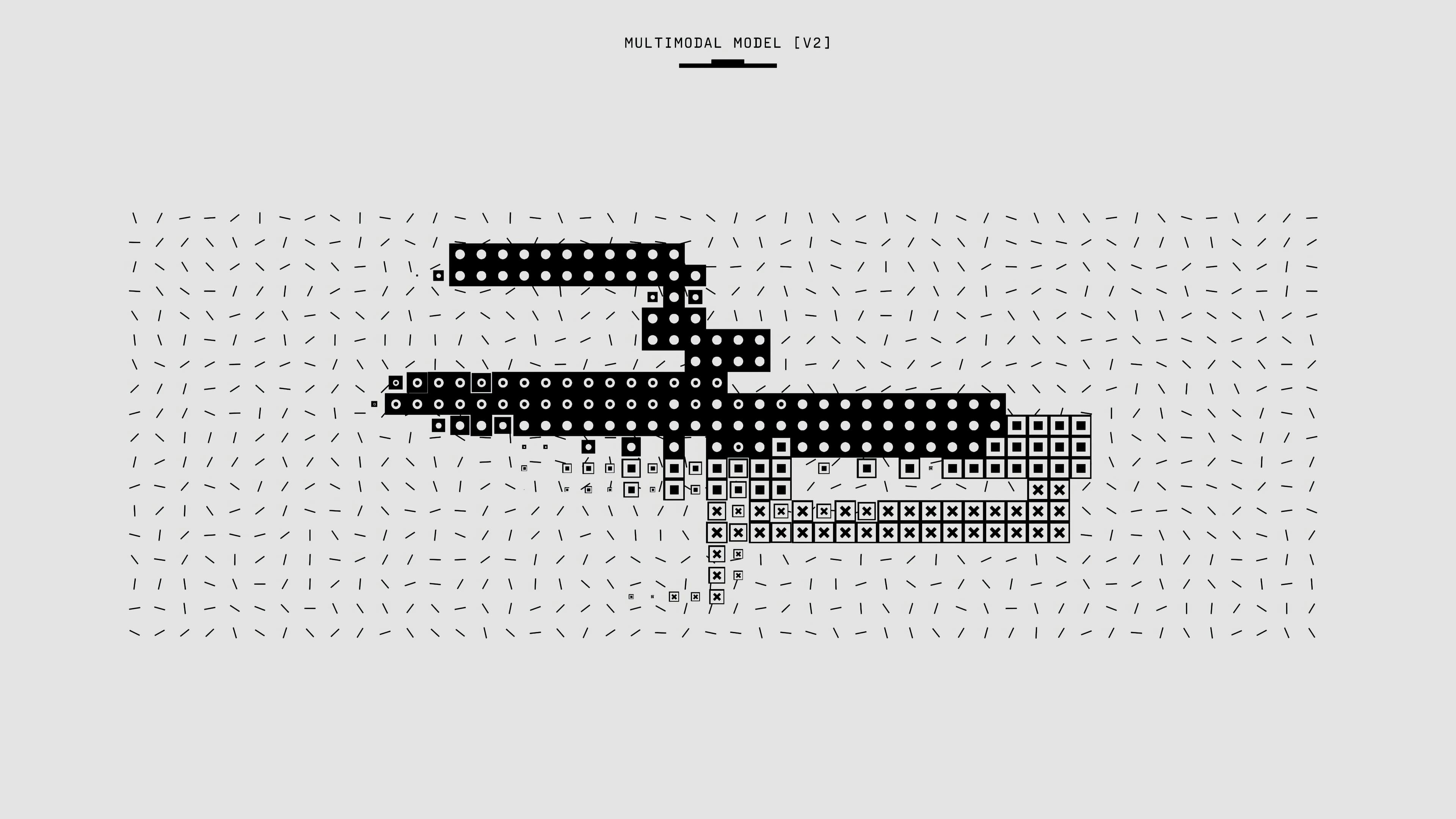

The core issue lies in the nature of machine learning itself: it learns from the world as it is, not as it should be. The challenge is to find ways to build these systems to be fair and equitable. This requires a focus on using diverse and representative data for training, as well as developing methods to audit algorithms for bias. We also see issues like AI hallucination, where a system presents confidently incorrect information, reminding us these tools aren't infallible and their outputs require critical human evaluation.
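As a rough illustration of what a very simple bias audit can look like, the Python sketch below compares how often two groups receive a positive decision and reports the ratio between the lowest and highest rate. The function names, the groups A and B, and the sample decisions are all made up for illustration; a real audit would use real outcome data and more than one fairness measure. A common rule of thumb, the four-fifths rule from US employment guidelines, treats a ratio below 0.8 as a signal worth investigating.

```python
from collections import defaultdict


def selection_rates(decisions):
    """Return the share of positive outcomes for each group.

    `decisions` is an iterable of (group, approved) pairs,
    where `approved` is True or False.
    """
    totals = defaultdict(int)
    approved = defaultdict(int)
    for group, ok in decisions:
        totals[group] += 1
        if ok:
            approved[group] += 1
    return {group: approved[group] / totals[group] for group in totals}


def disparate_impact_ratio(rates):
    """Ratio of the lowest group selection rate to the highest."""
    highest = max(rates.values())
    if highest == 0:
        # No group received a positive outcome, so there is no gap to measure.
        return 1.0
    return min(rates.values()) / highest


# Made-up hiring decisions for two hypothetical groups, A and B.
sample = (
    [("A", True)] * 6 + [("A", False)] * 4
    + [("B", True)] * 3 + [("B", False)] * 7
)

rates = selection_rates(sample)
ratio = disparate_impact_ratio(rates)

print(rates)
print(f"disparate impact ratio: {ratio:.2f}")
if ratio < 0.8:
    print("Below the four-fifths threshold: worth a closer look.")
```

A low ratio does not prove a system is unfair, and a high one does not prove it is fair. It is a starting point for the kind of human review the rest of this section argues for.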

The Need for Rules and Responsibility

The rise of AI also creates unique legal challenges. Our existing laws were not designed to handle autonomous systems. Who is responsible when an AI makes a mistake? Is it the developer, the user, or the owner? These are urgent questions that need answers as the technology continues to advance.

While the concerns are significant, AI does offer potential benefits. It could be used to reduce civilian casualties in conflict, improve medical diagnoses, and streamline logistics. The use of generative AI for business could unlock new efficiencies and creative potential. However, harnessing these benefits depends entirely on our ability to proceed with caution. We need to develop clear ethical frameworks for how these technologies are built and used.

Ultimately, the future of AI is not set in stone. AI is a tool, and its impact, for better or worse, will be determined by the choices we make. Shaping this future requires a collective effort from policymakers, technologists, and the public to ensure these powerful systems are used responsibly. What generative AI comes to mean in our lives is up to us. That effort demands transparency, accountability, and a global commitment to keeping human control and values at the center of this technological revolution.